
1 Introduction

In the post on matrices in Codea I mentioned that an affine transformation (things like rotation, scaling, translation) could be specified by giving four vectors. In this post I want to examine that a little more closely for the special case of a rotation. That is to say: how can I encode a rotation in $\mathbb{R}^3$? Clearly four vectors are enough, but also clearly too many (if for no other reason than that four vectors are enough to specify any affine transformation). Can we get that number down?

2 Encoding Schemes

It is worth considering at the outset what a good encoding scheme for rotations should look like. Here's a list of what I think are reasonable characteristics.

- It should be "computer friendly". By this I mean that it should use standard data structures: numbers and arrays; equivalently, vectors.
- It should be "human friendly". By this I mean that if I have a rotation in mind then I should be able (in theory) to compute its representation.

  These first two might well be incompatible, in which case we would choose two encodings near either end of the spectrum and a dictionary for translating between them.

- The most common operations should be straightforward to implement.

What does one want to do with rotations? Here's a short list:

- Compose two rotations,
- Apply a rotation to a vector,
- Invert a rotation.

3 Rotations

We actually need to start with a more basic question: what is a rotation of $\mathbb{R}^3$?

Think about this for a minute. It may be intuitively obvious, but if you think so then try writing down a description, or describe it to someone else. Be sure that you don't include anything that definitely is not a rotation.

In situations like this, the best thing is to return to a place where you are sure of what is what. In this case, rotations of the plane are pretty unambiguous. Stick a nail through a sheet of paper at the "origin", then rotations are what you do when you move the paper about that nail without deforming it. (Better get permission from the table owner before doing this.)

How can we generalise this to three dimensions? Fixing the origin is obvious and easy. But is that all that we should fix? On the one hand, a rotation of the plane moves the whole plane, so a rotation of space should (potentially) move the whole of space, and only the origin should be held fixed. On the other hand, a rotation of the plane moves two directions, so a rotation of space should (potentially) move just two directions, and so it should fix a whole line (through the origin, and depending on the rotation). Which is right?

(As an insight into mathematics, let me offer the opinion that actually it might be that neither is particularly "right" and that both have some merit in which case the important thing is to know which one is being used in a given circumstance.)

Before deciding, let us consider the other characteristic. This is that a rotation should not "deform" space. To make sense of this we need a notion of length and angle so we need to consider not just space but space with the dot (or inner) product. Then we require that a rotation preserve lengths and angles, which is equivalent to requiring that it preserve the dot product of two vectors.

Now comes a bit of magic. It turns out that a linear transformation of three-dimensional space which preserves the inner product has to have an invariant line¹. That is, there is a line which is mapped to itself by the transformation. The individual points on the line might not be fixed, but they stay on the line. In fact, there are two options for what happens on this line (since distances are preserved): either its points are fixed, or the line flips about the origin (mathematically, $v \mapsto -v$). Once one line is invariant, the orthogonal plane is also invariant (again, not pointwise). What happens on this plane will either be a rotation or a reflection.

¹ If you know a bit of linear algebra, this is because it has to have at least one real eigenvalue.

Now we want to disallow reflections, as those are a bit different. But we need to be careful: if our invariant line is inverted then that counts towards the transformation being a reflection, though it might not be a reflection after all if there is a different line that we could have chosen that was left alone.

So we end up with the following characterisation: a rotation preserves the dot product and has an "axis" which is fixed pointwise. On the corresponding orthogonal plane it is a 2D rotation.

Note that we didn't need to resolve our question about the "right" definition of rotations. However, if we moved up another dimension then we would have something to do there.

4 Describing Rotations

Now that we know what a rotation is we can think about the problem of describing one. The definition actually contains a description: we need an axis and a rotation on the orthogonal plane. An axis can be described by giving a vector along it, and we may as well make this a unit vector. A planar rotation can be represented by a matrix.

The first obvious simplification is to note that a planar rotation is completely determined by its angle. So we need four pieces of information: an axis in space (given by three numbers) and an angle.

The first thing to observe about this description is that it is not minimal. To specify an axis we give a unit vector in space. This is a point on a sphere, and to give a point on a sphere we actually only need two pieces of information. For example, the latitude and longitude completely specify a point on a sphere. So we could reduce our description further, to only three pieces of information: the angle of rotation and the latitude and longitude of the axis. But this description is problematic. We don't just want good descriptions of individual rotations; we want descriptions that vary nicely as we vary the rotation. The precise conditions for this are a little complicated (involving calculus), but the idea is that we don't want continuous families of descriptions of the same axis. In the latitude and longitude picture this happens at the poles: there the longitude parameter can be varied without changing the fact that we are standing at a pole.

It is possible to show that this must happen in general. If we assume a slightly stronger condition on the parametrisation (that its derivative always has full rank) then it is possible to show that there can be no global parametrisation of the sphere by two parameters.

So we use three parameters to describe a point on the sphere to avoid this problem and get a clean description of the sphere.

5 A Good Description

Let us consider this description in some detail. The next thing to point out is that there is more redundancy. All rotations of angle $0$ are the same, whatever the axis, and they are the same as rotations of angle $2\pi$, $4\pi$, …. Also, a rotation of angle $\theta$ about the $z$–axis, say, means: look down at the $xy$–plane and rotate it. Equally, we could look up at the $xy$–plane from below and describe the same rotation as a rotation of angle $-\theta$ about the $-z$–axis.

We don't want to remove redundancy purely for its own sake, but only where it interferes with either the use or the understanding of the encoding. So let us turn to that now.

One of the most important things to be able to do with rotations is to compose them. Let us try that with two rotations.

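We'll start with the simplest non-trivial situation: half rotations about axes. Let us write $i$ for $(1, 0, 0)$, $j$ for $(0, 1, 0)$, and $k$ for $(0, 0, 1)$, and consider a half rotation about each of these axes. Such a rotation is simple to describe: it leaves the given axis alone and is a reflection on each of the other two. That is, a rotation of angle $\pi$ around $i$ has the effect $i \mapsto i$, $j \mapsto -j$, and $k \mapsto -k$. Let's write these rotations as $R_i$, $R_j$, and $R_k$. So what does $R_i R_j$ do? That's "rotate around $j$ and then rotate around $i$" (we apply transformations from right to left). Rotating around $j$ does $(i, j, k) \mapsto (-i, j, -k)$. Then rotating around $i$ does $(-i, j, -k) \mapsto (-i, -j, k)$. So the combination is $(i, j, k) \mapsto (-i, -j, k)$. This is $R_k$. Hence our first rule is:

$$R_i R_j = R_k$$

Another obvious rule concerns rotating about the same axis. Writing $R_{a,\theta}$ for the rotation of angle $\theta$ about the unit axis $a$, it is clear that $R_{a,\beta} R_{a,\alpha} = R_{a,\alpha+\beta}$.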

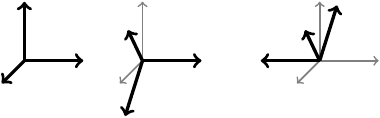

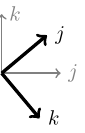

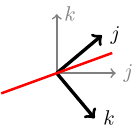

Now let's try something a little more complicated. Let's rotate by an arbitrary angle around one axis, say by $\theta$ around $k$, followed by a rotation of angle $\pi$ around $i$. That is, $R_i R_{k,\theta}$. What does this do? This is simple enough that we can draw it.

General nonsense says that this is again a rotation about some axis. The axis is the line "left alone". We can actually figure out where that line must be from this picture. The $z$–axis ends up "flipped". So any line that protrudes out from the $xy$–plane (i.e. has non-trivial $z$–component) will end up in the opposite half of space. So the axis must lie in the $xy$–plane. Let's draw just what the transformation does to the $xy$–plane.

From this picture, we can see that the $i$ and $j$ vectors are reflected in a line. That line is the bisector of where $i$ starts and where it ends up.

Since $i$ ends up at $(\cos\theta, -\sin\theta, 0)$, the axis is $\cos\frac{\theta}{2}\, i - \sin\frac{\theta}{2}\, j$. The rotation angle is again $\pi$, whence this rotation is:

$$R_i R_{k,\theta} = R_{\cos\frac{\theta}{2} i - \sin\frac{\theta}{2} j}$$
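As a quick numerical sanity check of this rule (and of $R_i R_j = R_k$), here is a minimal Python sketch. It is not Codea code, and the helper names `rot`, `matmul` and `close` are illustrative. It builds rotation matrices from an axis and an angle using Rodrigues' formula, then compares the composite with the predicted half rotation for one sample angle.

```python
import math


def rot(axis, angle):
    """Matrix of the rotation by `angle` about the unit vector `axis` (Rodrigues' formula)."""
    x, y, z = axis
    c, s = math.cos(angle), math.sin(angle)
    t = 1 - c
    return [[c + t * x * x, t * x * y - s * z, t * x * z + s * y],
            [t * x * y + s * z, c + t * y * y, t * y * z - s * x],
            [t * x * z - s * y, t * y * z + s * x, c + t * z * z]]


def matmul(m, n):
    """Product m n of two 3x3 matrices (n is applied first)."""
    return [[sum(m[r][k] * n[k][col] for k in range(3)) for col in range(3)]
            for r in range(3)]


def close(m, n, tol=1e-12):
    """True when two 3x3 matrices agree entrywise to within `tol`."""
    return all(abs(m[r][col] - n[r][col]) < tol for r in range(3) for col in range(3))


theta = 1.2
# Rotate by theta about k first, then by a half turn about i.
composite = matmul(rot((1, 0, 0), math.pi), rot((0, 0, 1), theta))
# The rule predicts a half turn about cos(theta/2) i - sin(theta/2) j.
predicted = rot((math.cos(theta / 2), -math.sin(theta / 2), 0), math.pi)
print(close(composite, predicted))  # True
```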

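Now let's try something a little more complicated still: a rotation of $\alpha$ about the $x$–axis followed by a rotation of $\beta$ about the $z$–axis. Where do we end up? General nonsense says that this is a rotation about some axis. The matrices for these are:

$$R_{i,\alpha} = \begin{pmatrix} 1 & 0 & 0 \\ 0 & \cos\alpha & -\sin\alpha \\ 0 & \sin\alpha & \cos\alpha \end{pmatrix}, \qquad R_{k,\beta} = \begin{pmatrix} \cos\beta & -\sin\beta & 0 \\ \sin\beta & \cos\beta & 0 \\ 0 & 0 & 1 \end{pmatrix}$$

Their product is:

$$M = R_{k,\beta} R_{i,\alpha} = \begin{pmatrix} \cos\beta & -\sin\beta\cos\alpha & \sin\beta\sin\alpha \\ \sin\beta & \cos\beta\cos\alpha & -\cos\beta\sin\alpha \\ 0 & \sin\alpha & \cos\alpha \end{pmatrix}$$

We want to know how to describe this as a rotation about some axis. The axis will be its "invariant vector". That is, $v$ such that $Mv = v$, or $(M - I)v = 0$. We can find that vector using elementary linear algebra. We look at the matrix:

$$M - I = \begin{pmatrix} \cos\beta - 1 & -\sin\beta\cos\alpha & \sin\beta\sin\alpha \\ \sin\beta & \cos\beta\cos\alpha - 1 & -\cos\beta\sin\alpha \\ 0 & \sin\alpha & \cos\alpha - 1 \end{pmatrix}$$

Knowing that $(M - I)v = 0$ has a non-trivial solution, we can see that if we write a solution vector as $v = (x, y, z)$ then we have (from the top line):

$$x(1 - \cos\beta) = \sin\beta\,(z\sin\alpha - y\cos\alpha)$$

while from the middle line we have:

$$x\sin\beta = y(1 - \cos\alpha\cos\beta) + z\sin\alpha\cos\beta$$

Multiplying the middle line by $1 - \cos\beta$, substituting in the top line, and using $\sin^2\beta = (1 - \cos\beta)(1 + \cos\beta)$, we find that $z\sin\alpha - y\cos\alpha = y$. Hence:

$$y(1 + \cos\alpha) = z\sin\alpha, \qquad x(1 - \cos\beta) = y\sin\beta$$

We can simplify this a little by noting that the double angle formulae imply that:

$$\frac{\sin\alpha}{1 + \cos\alpha} = \tan\frac{\alpha}{2}, \qquad \frac{\sin\beta}{1 - \cos\beta} = \cot\frac{\beta}{2}$$

So our invariant line is along the vector:

$$\left(\cot\frac{\beta}{2},\ 1,\ \cot\frac{\alpha}{2}\right)$$

or, equivalently (multiplying through by $\sin\frac{\alpha}{2}\sin\frac{\beta}{2}$):

$$\left(\sin\frac{\alpha}{2}\cos\frac{\beta}{2},\ \sin\frac{\alpha}{2}\sin\frac{\beta}{2},\ \cos\frac{\alpha}{2}\sin\frac{\beta}{2}\right)$$

This isn't a unit vector, so it isn't right for an axis, but before we normalise it let us work out the angle of the new rotation. Looking at the two matrices for the simple rotations, we see that the sums of the diagonal entries are $1 + 2\cos\alpha$ and $1 + 2\cos\beta$. This is true in general (a rotation of angle $\theta$ has trace $1 + 2\cos\theta$), so if we write the new angle as $\gamma$ it will satisfy:

$$1 + 2\cos\gamma = \cos\beta + \cos\alpha\cos\beta + \cos\alpha$$

The right-hand side simplifies using double angle formulae as follows:

$$\cos\alpha + \cos\beta + \cos\alpha\cos\beta = (1 + \cos\alpha)(1 + \cos\beta) - 1 = 4\cos^2\frac{\alpha}{2}\cos^2\frac{\beta}{2} - 1$$

The left-hand side simplifies as:

$$1 + 2\cos\gamma = 4\cos^2\frac{\gamma}{2} - 1$$

Hence (up to a sign ambiguity), the new angle is related to the old ones by the formula:

$$\cos\frac{\gamma}{2} = \cos\frac{\alpha}{2}\cos\frac{\beta}{2}$$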
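The derivation above can be checked numerically. The following Python sketch uses illustrative helper names and arbitrary sample angles. It builds $M = R_{k,\beta} R_{i,\alpha}$, confirms that $M$ fixes the vector $\left(\sin\frac{\alpha}{2}\cos\frac{\beta}{2}, \sin\frac{\alpha}{2}\sin\frac{\beta}{2}, \cos\frac{\alpha}{2}\sin\frac{\beta}{2}\right)$, and confirms that its trace equals $4\cos^2\frac{\alpha}{2}\cos^2\frac{\beta}{2} - 1$.

```python
import math


def rot_x(a):
    """Matrix of the rotation by angle a about the x-axis."""
    c, s = math.cos(a), math.sin(a)
    return [[1, 0, 0], [0, c, -s], [0, s, c]]


def rot_z(b):
    """Matrix of the rotation by angle b about the z-axis."""
    c, s = math.cos(b), math.sin(b)
    return [[c, -s, 0], [s, c, 0], [0, 0, 1]]


def matmul(m, n):
    """Product m n of two 3x3 matrices (n is applied first)."""
    return [[sum(m[r][k] * n[k][col] for k in range(3)) for col in range(3)]
            for r in range(3)]


def apply(m, v):
    """Apply the 3x3 matrix m to the vector v."""
    return [sum(m[r][k] * v[k] for k in range(3)) for r in range(3)]


alpha, beta = 0.7, 1.9
M = matmul(rot_z(beta), rot_x(alpha))  # about x first, then about z

ca, sa = math.cos(alpha / 2), math.sin(alpha / 2)
cb, sb = math.cos(beta / 2), math.sin(beta / 2)
axis = [sa * cb, sa * sb, ca * sb]  # the invariant vector found above
cos_half_gamma = ca * cb            # cos(gamma/2) = cos(alpha/2) cos(beta/2)

axis_error = max(abs(p - q) for p, q in zip(apply(M, axis), axis))
trace = M[0][0] + M[1][1] + M[2][2]
trace_error = abs(trace - (4 * cos_half_gamma ** 2 - 1))
print(axis_error < 1e-12, trace_error < 1e-12)  # True True
```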

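Half angles are proving to be rather important! Although it isn't the half angles themselves that keep turning up, it is their sines and cosines. So instead of recording the angle and the axis, maybe we can get away with recording the sine and cosine of the half angle together with the axis. Note that we can reconstruct the matrix from the sine and cosine of the half angle, since the matrix only needs to know the sine and cosine of the full angle and we get those from the double angle formulae:

$$\cos\theta = \cos^2\frac{\theta}{2} - \sin^2\frac{\theta}{2}, \qquad \sin\theta = 2\sin\frac{\theta}{2}\cos\frac{\theta}{2}$$

This seems as though we are adding in another piece of information, as we now record five numbers: $\left(\cos\frac{\theta}{2}, \sin\frac{\theta}{2}, a\right)$, where $a$ is the unit axis. However, although this is more information, it is also more useful, in that the cosine and sine of the half angle are an easier starting point than the angle.

Expressed in these terms (with the rotation applied second written on the left), our initial rules are:

$$(0, 1, i)(0, 1, j) = (0, 1, k)$$

$$\left(\cos\frac{\beta}{2}, \sin\frac{\beta}{2}, a\right)\left(\cos\frac{\alpha}{2}, \sin\frac{\alpha}{2}, a\right) = \left(\cos\frac{\alpha+\beta}{2}, \sin\frac{\alpha+\beta}{2}, a\right)$$

$$(0, 1, i)\left(\cos\frac{\theta}{2}, \sin\frac{\theta}{2}, k\right) = \left(0, 1, \cos\frac{\theta}{2}\, i - \sin\frac{\theta}{2}\, j\right)$$

The final rule, for the rotation about the $x$–axis followed by the one about the $z$–axis, looks quite simple except for the fact that we haven't renormalised the axis. If we ignore that, we get:

$$\left(\cos\frac{\beta}{2}, \sin\frac{\beta}{2}, k\right)\left(\cos\frac{\alpha}{2}, \sin\frac{\alpha}{2}, i\right) = \left(\cos\frac{\alpha}{2}\cos\frac{\beta}{2},\ ?,\ \sin\frac{\alpha}{2}\cos\frac{\beta}{2}\, i + \sin\frac{\alpha}{2}\sin\frac{\beta}{2}\, j + \cos\frac{\alpha}{2}\sin\frac{\beta}{2}\, k\right)$$

I put a question mark in there as I don't know what the sine of the half angle is. I could work it out, but I'd like to see if I can avoid it. That last formula is quite suggestive. Let me write it slightly differently. By complete abuse of notation, let me identify a unit vector in $\mathbb{R}^3$ with a half rotation about that axis. Thus we use $i$ to also mean the rotation $R_i$. With this notation, our first rule reads $ij = k$ (and, cycling the axes, $jk = i$ and $ki = j$). Putting $j = ki$ into the above formula (this is where the real abuse of notation comes in), we get:

$$\left(\cos\frac{\alpha}{2}\cos\frac{\beta}{2},\ ?,\ \cos\frac{\beta}{2}\left(\sin\frac{\alpha}{2}\, i\right) + \cos\frac{\alpha}{2}\left(\sin\frac{\beta}{2}\, k\right) + \left(\sin\frac{\beta}{2}\, k\right)\left(\sin\frac{\alpha}{2}\, i\right)\right)$$

The thing to notice about this is that $\sin\frac{\alpha}{2}$ never appears without $i$ next to it; similarly, $\sin\frac{\beta}{2}$ always comes along with $k$. So if we are entertaining not normalising our axes (after all, is it really necessary?), we could write $\left(\cos\frac{\theta}{2}, \sin\frac{\theta}{2}, a\right)$ as $\left(\cos\frac{\theta}{2}, \sin\frac{\theta}{2}\, a\right)$. Does this work? Let's try it with our rules.

The one we've left out this time is the second formula. In this one, let $a = \sin\frac{\alpha}{2}\, u$ and $b = \sin\frac{\beta}{2}\, u$ for a unit axis $u$. Then the axis becomes $\cos\frac{\beta}{2}\, a + \cos\frac{\alpha}{2}\, b = \sin\frac{\alpha+\beta}{2}\, u$, which is fine. But the cosine of the half angle is $\cos\frac{\alpha+\beta}{2} = \cos\frac{\alpha}{2}\cos\frac{\beta}{2} - \sin\frac{\alpha}{2}\sin\frac{\beta}{2}$, so we need to recover the product $\sin\frac{\alpha}{2}\sin\frac{\beta}{2}$. As $u$ is a unit vector, we can get this from $a \cdot b$. Thus our second rule is:

$$\left(\cos\frac{\beta}{2}, b\right)\left(\cos\frac{\alpha}{2}, a\right) = \left(\cos\frac{\alpha}{2}\cos\frac{\beta}{2} - a \cdot b,\ \cos\frac{\beta}{2}\, a + \cos\frac{\alpha}{2}\, b\right)$$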

6 The Product Rule

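Let's look again at the rule for the rotation about $i$ followed by the rotation about $k$, writing $a = \sin\frac{\alpha}{2}\, i$ and $b = \sin\frac{\beta}{2}\, k$:

$$\left(\cos\frac{\beta}{2}, b\right)\left(\cos\frac{\alpha}{2}, a\right) = \left(\cos\frac{\beta}{2}\cos\frac{\alpha}{2},\ \cos\frac{\beta}{2}\, a + b\cos\frac{\alpha}{2} + ba\right)$$

This looks a lot like the "product rule" for distributing multiplication over addition:

$$(p + q)(r + s) = pr + ps + qr + qs$$

Indeed, if we write the comma as a plus, we get precisely that:

$$\left(\cos\frac{\beta}{2} + b\right)\left(\cos\frac{\alpha}{2} + a\right) = \cos\frac{\beta}{2}\cos\frac{\alpha}{2} + \cos\frac{\beta}{2}\, a + b\cos\frac{\alpha}{2} + ba$$

This case dealt with the situation where the two rotations have orthogonal axes. What about the opposite case, where the rotations have the same axis (so $a$ and $b$ are both multiples of one unit vector)? Here we had:

$$\left(\cos\frac{\beta}{2}, b\right)\left(\cos\frac{\alpha}{2}, a\right) = \left(\cos\frac{\beta}{2}\cos\frac{\alpha}{2} - a \cdot b,\ \cos\frac{\beta}{2}\, a + b\cos\frac{\alpha}{2}\right)$$

Can we make that fit the above pattern? We want to write:

$$\left(\cos\frac{\beta}{2} + b\right)\left(\cos\frac{\alpha}{2} + a\right) = \cos\frac{\beta}{2}\cos\frac{\alpha}{2} + \cos\frac{\beta}{2}\, a + b\cos\frac{\alpha}{2} + ba, \qquad \text{with } ba = -a \cdot b$$

This works providing we accept that the "product" of two parallel axes should be the negative of their dot product.

So the product of two parallel axes is the negative of their dot product, and the product of two orthogonal axes is … what? For $i$ and $j$ it was $k$. Is there a general operation on vectors that, for $i$ and $j$, spits out $k$? There is: the cross product. So for orthogonal axes we would expect to get the cross product.

Now given any two vectors, say $a$ and $b$, with $a \neq 0$, we can write $b = \lambda a + b'$ where $b'$ is orthogonal to $a$. Since multiplication ought to distribute over addition, we would expect the following rule:

$$ab = a(\lambda a + b') = \lambda\, aa + ab'$$

This simplifies since $aa = -a \cdot a$ and $ab' = a \times b'$, whence:

$$ab = -\lambda\, a \cdot a + a \times b'$$

whence, as $\lambda\, a \cdot a = a \cdot b$ and $a \times b' = a \times b$:

$$ab = -a \cdot b + a \times b$$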
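The conjectured product of two vectors is easy to express in code. This minimal Python sketch uses an illustrative name, `axis_product`, which returns the pair (number part, vector part). It checks the two special cases and the decomposition argument for one arbitrary pair of vectors.

```python
def dot(a, b):
    """Dot product of two 3-vectors."""
    return sum(p * q for p, q in zip(a, b))


def cross(a, b):
    """Cross product of two 3-vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def axis_product(a, b):
    """Conjectured product of two vectors: (number part, vector part) = (-a.b, a x b)."""
    return (-dot(a, b), cross(a, b))


i, j, k = (1, 0, 0), (0, 1, 0), (0, 0, 1)
print(axis_product(i, j))  # (0, (0, 0, 1)): orthogonal axes give the cross product
print(axis_product(k, k))  # (-1, (0, 0, 0)): parallel axes give minus the dot product

# Splitting b = lam * a + b_perp and distributing gives the same answer.
a, b = (1.0, 2.0, -1.0), (0.5, -1.0, 3.0)
lam = dot(a, b) / dot(a, a)
b_perp = tuple(q - lam * p for p, q in zip(a, b))
split = (-lam * dot(a, a), cross(a, b_perp))  # lam * (aa) + a b_perp
direct = axis_product(a, b)
```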

Now none of this is a proof. We have no reason for believing that multiplication really does distribute over addition in this way. Also we have generalised from some very specific examples to the general case. Nonetheless, it is evidence for the following conjecture.

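Conjecture Let $R_1$ be a rotation of angle $\theta_1$ about unit axis $a_1$ and $R_2$ a rotation of angle $\theta_2$ about unit axis $a_2$. Let $R_3 = R_2 R_1$ be their composition and let $\theta_3$ be its angle of rotation about axis $a_3$.

For $m = 1, 2, 3$ let $c_m = \cos\frac{\theta_m}{2}$ and $v_m = \sin\frac{\theta_m}{2}\, a_m$. Then:

$$(c_3, v_3) = \left(c_2 c_1 - v_2 \cdot v_1,\ c_2 v_1 + c_1 v_2 + v_2 \times v_1\right)$$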
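Before proving anything, we can gather numerical evidence for the conjecture. The Python sketch below composes two rotations as matrices, built with Rodrigues' formula. It then compares the result with the matrix recovered from the conjectured $(c_3, v_3)$. The sample axes and angles are arbitrary, and the helper names are illustrative. Agreement on one example is evidence, not a proof.

```python
import math


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def rot(axis, angle):
    """Rotation matrix about a unit axis (Rodrigues' formula)."""
    x, y, z = axis
    c, s = math.cos(angle), math.sin(angle)
    t = 1 - c
    return [[c + t * x * x, t * x * y - s * z, t * x * z + s * y],
            [t * x * y + s * z, c + t * y * y, t * y * z - s * x],
            [t * x * z - s * y, t * y * z + s * x, c + t * z * z]]


def matmul(m, n):
    return [[sum(m[r][k] * n[k][col] for k in range(3)) for col in range(3)]
            for r in range(3)]


def half_angle_data(axis, angle):
    """(c, v) with c = cos(angle/2) and v = sin(angle/2) * axis."""
    s = math.sin(angle / 2)
    return math.cos(angle / 2), tuple(s * p for p in axis)


def compose(d2, d1):
    """Conjectured data for 'rotate by d1, then by d2'."""
    c2, v2 = d2
    c1, v1 = d1
    c3 = c2 * c1 - dot(v2, v1)
    v3 = tuple(c2 * p + c1 * q + r for p, q, r in zip(v1, v2, cross(v2, v1)))
    return c3, v3


def data_to_matrix(data):
    """Recover the angle and unit axis from (c, v), then build the matrix."""
    c, v = data
    n = math.sqrt(dot(v, v))
    return rot(tuple(p / n for p in v), 2 * math.atan2(n, c))


a1, theta1 = (1 / 3, 2 / 3, 2 / 3), 0.9
a2, theta2 = (0.0, 0.6, 0.8), 2.3
actual = matmul(rot(a2, theta2), rot(a1, theta1))
predicted = data_to_matrix(compose(half_angle_data(a2, theta2), half_angle_data(a1, theta1)))
error = max(abs(actual[r][col] - predicted[r][col]) for r in range(3) for col in range(3))
print(error < 1e-12)  # True
```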

This rule certainly subsumes all of our previous rules, but for a proof we need to show that this works for any rotations. There is a geometric argument that says that it is enough to show this under certain assumptions on the axes, but it will be more useful for later if we work out the transformation matrix starting from the cosine of the half angle and the scaled axis.

So we start with a rotation with data $(c, v)$. The assumption is that $c$ is the cosine of the half angle and that $v$ is the axis scaled by the sine of the half angle. Note that under those assumptions,

$$c^2 + \|v\|^2 = 1$$

What is the resultant transformation? Let $x$ be an arbitrary vector. Since rotations are linear, we can split $x$ into a piece along the axis and a piece orthogonal to it:

$$x = x_\parallel + x_\perp, \qquad x_\parallel = \frac{x \cdot v}{v \cdot v}\, v, \qquad x_\perp = x - x_\parallel$$

The part along the axis stays the same. The orthogonal part rotates by the given angle. To specify this rotation we need two vectors in the orthogonal plane. Assuming that we weren't unlucky in our choice (that is, $x_\perp \neq 0$), we already have one of them: the orthogonal part of $x$. We can get another using cross products:

$$v \times x_\perp$$

This isn't quite the vector we'll use. For a start, we can simplify it, since $v \times v = 0$:

$$v \times x_\perp = v \times x$$

More importantly, it's the wrong length. We need our two orthogonal vectors to have the same length. That is, we want $v \times x$ to have the same length as $x_\perp$. Since $v$ is orthogonal to $x_\perp$, the length of their cross product is the product of their lengths. Thus $v \times x$ is out by a factor of $\|v\|$. So we take:

$$\frac{1}{\|v\|}\, v \times x$$

for our third vector.

The rotation rotates $x_\perp$ by angle $\theta$ towards $\frac{1}{\|v\|}\, v \times x$. Thus the total transformation is:

$$x \mapsto x_\parallel + \cos\theta\, x_\perp + \frac{\sin\theta}{\|v\|}\, v \times x$$

Now let us recall the double angle formulae:

$$\cos\theta = c^2 - \|v\|^2, \qquad \sin\theta = 2c\,\|v\|$$

and substitute in:

$$x \mapsto x_\parallel + (c^2 - \|v\|^2)\, x_\perp + 2c\, v \times x$$

Writing $x_\perp = x - x_\parallel$, in there we have a term:

$$x_\parallel - (c^2 - \|v\|^2)\, x_\parallel = (1 - c^2 + \|v\|^2)\, \frac{x \cdot v}{v \cdot v}\, v$$

Recall that $c^2 + \|v\|^2 = 1$, whence this simplifies to $2(x \cdot v)\, v$. This leaves us with:

$$x \mapsto (c^2 - \|v\|^2)\, x + 2(v \cdot x)\, v + 2c\, v \times x$$

At first sight, this is not very enlightening. At second sight, it at least justifies specifying a rotation using the cosine of the half angle and the axis scaled by the sine of the half angle: only $c$ and $v$ appear in this formula. It is also a reasonably concise formula, with no complicated cosines or sines. But it doesn't practically help us resolve our conjecture, since composing this formula with another such will be quite messy. So while we could do that and try to rearrange the result into the expected formula, we'll take a short cut.
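The trig-free formula is easy to test against the usual axis-and-angle (Rodrigues) form of a rotation. In this Python sketch, `rotate_half_angle` and `rotate_axis_angle` are illustrative names, and the axis, angle and test vector are arbitrary.

```python
import math


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def rotate_half_angle(c, v, x):
    """Apply the rotation with data (c, v): (c^2 - |v|^2) x + 2 (v.x) v + 2c (v x x)."""
    k1 = c * c - dot(v, v)
    k2 = 2 * dot(v, x)
    vx = cross(v, x)
    return tuple(k1 * p + k2 * q + 2 * c * r for p, q, r in zip(x, v, vx))


def rotate_axis_angle(axis, angle, x):
    """Reference: cos(t) x + sin(t) (a x x) + (1 - cos(t)) (a.x) a, for a unit axis a."""
    c, s = math.cos(angle), math.sin(angle)
    ax = cross(axis, x)
    d = dot(axis, x)
    return tuple(c * p + s * q + (1 - c) * d * r for p, q, r in zip(x, ax, axis))


axis, angle = (1 / 3, 2 / 3, 2 / 3), 2.0
c = math.cos(angle / 2)
v = tuple(math.sin(angle / 2) * p for p in axis)
x = (0.3, -1.2, 2.5)
new = rotate_half_angle(c, v, x)
ref = rotate_axis_angle(axis, angle, x)
print(max(abs(p - q) for p, q in zip(new, ref)) < 1e-12)  # True
```

The sines and cosines are evaluated only once, when $(c, v)$ is built. Applying the rotation after that needs nothing but multiplications and additions.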

Remember that we introduced some dodgy notation earlier, in which we identified a unit vector with a half rotation about that axis. This fits with our new additive notation. A half rotation has angle $\pi$, so the half angle of a half rotation is $\frac{\pi}{2}$, whence we're identifying the unit vector $u$ with $(0, u)$. So it's looking more reasonable by the minute! (Though still not justified.)

Let's see what happens under our multiplicative formula when the first rotation is of this form:

$$(c, v)(0, x) = (-v \cdot x,\ c\,x + v \times x)$$

Still not very enlightening. But if we remember from earlier, this isn't enough. When we computed $R_i R_{k,\theta}$, the result was a rotation of angle $\pi$, but the axis had mysteriously only rotated half as much as we wanted (this is where we got the inkling that half angles would be important). So we need to apply the rotation again, or at least apply some other rotation. The difference between the special case of $R_i R_{k,\theta}$ and the above is that in our special case the axes were orthogonal. In the above that isn't (necessarily) the case, and so we've ended up with a non-trivial angle: we have $-v \cdot x$ in the "angle" part. If we simply rotate again by the same angle about the same axis, we'll have the same issue. We need some way to get rid of that term. The obvious way to do it is to rotate about $-v$, since that will introduce a $+v \cdot x$ term to cancel it out. But if we rotate by the same angle about $-v$, we simply cancel out the rotation that we've already done. We need a cunning plan.

The cunning plan is to remember that rotations do not commute. So applying the new rotation on the left has a different effect to applying it on the right.

Let's see if this cunning plan is the outlier².

² I.e. actually works, unlike all of the other cunning plans in history.

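Let's write $q = (c, v)$ and $q^* = (c, -v)$, and identify $x$ with $(0, x)$,

so that we want to compute:

$$q\, x\, q^*$$

Then:

$$q\, x\, q^* = (-v \cdot x,\ c\,x + v \times x)(c, -v) = \left(0,\ (v \cdot x)\, v + c^2 x + 2c\, v \times x - (v \times x) \times v\right)$$

which is looking very promising.

We just need to deal with that last term: $(v \times x) \times v$. This is orthogonal to $v$ and to $v \times x$. So long as $x$ is not parallel to $v$ (and if it were, this term would be zero so we wouldn't be worrying about it), a vector orthogonal to $v$ and to $v \times x$ is $x_\perp$. Thus there is some number $\lambda$ such that:

$$(v \times x) \times v = \lambda\, x_\perp$$

To find $\lambda$ we can take dot products of everything in sight with $x$ to get:

$$\left((v \times x) \times v\right) \cdot x = \lambda\, x_\perp \cdot x = \lambda\, \|x_\perp\|^2$$

The left-hand side is a "triple product", $(a \times b) \cdot c$, and it is invariant under cyclic permutation of its factors. So we get:

$$(v \times x) \cdot (v \times x) = \lambda\, \|x_\perp\|^2$$

The length of $v \times x$ is the length of $v$ times the length of the part of $x$ orthogonal to $v$. Thus:

$$\|v\|^2\, \|x_\perp\|^2 = \lambda\, \|x_\perp\|^2$$

Whence $\lambda = \|v\|^2$ and

$$(v \times x) \times v = \|v\|^2\, x_\perp = \|v\|^2\, x - (v \cdot x)\, v$$

Substituting back in,

$$q\, x\, q^* = \left(0,\ (c^2 - \|v\|^2)\, x + 2(v \cdot x)\, v + 2c\, v \times x\right)$$

Thus we have shown the following.

Theorem Let $R$ be a rotation with representation $q = (c, v)$. Then for a vector $x$ we have:

$$(0, R(x)) = q\, (0, x)\, q^*$$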
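The theorem can be spot-checked in a few lines of Python. Here the number-and-vector pairs $(a, A)$ are stored as `(float, 3-tuple)`, and `qmul` and `conj` are illustrative names. The number part of $q\,(0, x)\,q^*$ should vanish, and the vector part should match the formula derived earlier.

```python
import math


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def qmul(p, q):
    """(a, A)(b, B) = (ab - A.B, aB + bA + A x B)."""
    a, A = p
    b, B = q
    return (a * b - dot(A, B),
            tuple(a * bi + b * ai + ci for ai, bi, ci in zip(A, B, cross(A, B))))


def conj(q):
    """q* = (c, -v)."""
    c, v = q
    return (c, tuple(-p for p in v))


def rotate_half_angle(c, v, x):
    """(c^2 - |v|^2) x + 2 (v.x) v + 2c (v x x)."""
    k1, k2, vx = c * c - dot(v, v), 2 * dot(v, x), cross(v, x)
    return tuple(k1 * p + k2 * q + 2 * c * r for p, q, r in zip(x, v, vx))


axis, angle = (1 / 3, 2 / 3, 2 / 3), 2.0
q = (math.cos(angle / 2), tuple(math.sin(angle / 2) * p for p in axis))
x = (0.3, -1.2, 2.5)
number, vector = qmul(qmul(q, (0.0, x)), conj(q))
expected = rotate_half_angle(q[0], q[1], x)
print(abs(number) < 1e-12, max(abs(p - r) for p, r in zip(vector, expected)) < 1e-12)
# True True
```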

This is almost enough to prove our conjecture. What we have is that if we have two rotations then (with the obvious notation):

$$(0, R_2 R_1 x) = q_2 \left(q_1\, (0, x)\, q_1^*\right) q_2^*$$

What we need is:

$$(0, R_2 R_1 x) = (q_2 q_1)\, (0, x)\, (q_2 q_1)^*$$

The point here is that the multiplications are being applied in different orders. If we have three numbers, say $a$, $b$, $c$, and wish to find their product, then we can multiply them in two ways (without changing their order). We can first multiply $a$ and $b$ and then multiply this product by $c$. Or we can multiply $b$ and $c$ and then multiply $a$ by this product. We write these as $(ab)c$ and $a(bc)$. That multiplication of numbers is associative says that it doesn't matter which route we take. We need to know that our new multiplication is similarly associative, so that we can rebracket multiplications to suit our purposes. Then our conjecture would follow from our theorem.

Except that we do need one more thing. We also need to examine the interaction of the operation $q \mapsto q^*$ with multiplication.

7 Quaternions

Our "objects of interest" are of the form "number and vector". To correspond to a rotation, these need to satisfy some condition (namely that $c^2 + \|v\|^2 = 1$). But our multiplication formula makes sense without that condition, so for the moment let's ignore it. Thus we are studying things of the form "number and vector" with a strange multiplication given by the formula:

$$(a, A)(b, B) = (ab - A \cdot B,\ aB + bA + A \times B)$$

In this case, "vector" means "3–vector". A "number and vector" is thus a 4–vector. So we can simplify our notation by working in $\mathbb{R}^4$ and with 4–vectors. We'll use letters in the neighbourhood of $q$ (such as $p$, $q$, $r$) for 4–vectors. We can promote a number to a 4–vector by the identification $a \mapsto (a, 0)$, and a 3–vector to a 4–vector by $v \mapsto (0, v)$. Thus $(a, v) = a + v$.
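To make the 4–vector form concrete, here is a minimal Python sketch of the multiplication on 4-tuples $(a, x, y, z)$, standing for $a + (x, y, z)$. The tuple layout and the name `qmul` are my own choices, not those of any particular library. The sketch also previews two facts established below: $1$ is a unit, and $ij \neq ji$.

```python
def qmul(p, q):
    """Multiply 4-tuples (a, x, y, z) = a + (x, y, z) with the rule above."""
    a, x1, y1, z1 = p
    b, x2, y2, z2 = q
    # Number part ab - A.B; vector part aB + bA + A x B.
    return (a * b - x1 * x2 - y1 * y2 - z1 * z2,
            a * x2 + b * x1 + y1 * z2 - z1 * y2,
            a * y2 + b * y1 + z1 * x2 - x1 * z2,
            a * z2 + b * z1 + x1 * y2 - y1 * x2)


one = (1, 0, 0, 0)
i, j, k = (0, 1, 0, 0), (0, 0, 1, 0), (0, 0, 0, 1)
print(qmul(i, j))  # (0, 0, 0, 1), i.e. k
print(qmul(j, i))  # (0, 0, 0, -1), i.e. -k: the product is not commutative
print(qmul(one, (2, 3, 5, 7)) == (2, 3, 5, 7))  # True: 1 is a unit
```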

What we want to establish are the properties of with this new multiplication. To see what we are aiming for, consider multiplication of numbers. This satisfies quite a few nice properties:

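- It is associative: $(ab)c = a(bc)$
- It is commutative: $ab = ba$
- It has a unit: $1a = a = a1$
- Non-zero numbers have inverses: $a a^{-1} = 1 = a^{-1} a$
- It distributes over addition: $a(b + c) = ab + ac$, $(a + b)c = ac + bc$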

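Let's examine these for our new multiplication on $\mathbb{R}^4$. In actual fact, the best place to start is distributivity. This is because the formula for the multiplication involves lots of additions, so it will be nice to know that we can split those up.

Let $p, q, r \in \mathbb{R}^4$ and write them as $p = (a, A)$, $q = (b, B)$, and $r = (c, C)$. Consider $p(q + r)$:

$$\begin{aligned} p(q + r) &= \left(a(b + c) - A \cdot (B + C),\ a(B + C) + (b + c)A + A \times (B + C)\right) \\ &= (ab - A \cdot B,\ aB + bA + A \times B) + (ac - A \cdot C,\ aC + cA + A \times C) \\ &= pq + pr \end{aligned}$$

A similar argument establishes $(p + q)r = pr + qr$.

The next property that we shall tackle is the unit. We want $e$ such that $eq = q$ for all $q$. Looking at the formula for the multiplication, we want $e = (a, A)$ such that, for all $(b, B)$:

$$(ab - A \cdot B,\ aB + bA + A \times B) = (b, B)$$

Looking at that, we want $ab - A \cdot B = b$. This has to hold for any $(b, B)$. Taking $b = 0$, we get $A \cdot B = 0$ for all $B$, whence $A = 0$. Then we must have $a = 1$. Substituting in, we see that this does work. Hence the unit is $(1, 0)$, which is just the number $1$. (We also need to check that this works when multiplied on the right. This is straightforward.)

What about inverses? In this case, for $q = (a, A)$ (and we might need some non-zero condition here), we want $p = (b, B)$ such that:

$$(ab - A \cdot B,\ aB + bA + A \times B) = (1, 0)$$

How do we get $aB + bA + A \times B = 0$? Well, $A \times B$ is orthogonal to both $A$ and $B$, so getting $0$ is impossible unless $A \times B = 0$. To do this, we need $A$ and $B$ parallel. That is, $B = \lambda A$ for some $\lambda$. Then to get $aB + bA = 0$ we must have $b = -\lambda a$. Looking at the number part, we want to have $ab - A \cdot B = 1$. Substituting in, we get $-\lambda(a^2 + A \cdot A) = 1$, whence $\lambda = -\frac{1}{a^2 + A \cdot A}$.

Note that $a^2 + A \cdot A$ is the dot product of $q$ with itself when considered as a 4–vector, and is thus non-zero if and only if $q$ is non-zero. Hence if $q$ is non-zero, it has a multiplicative inverse, and that inverse is given by:

$$q^{-1} = \frac{1}{a^2 + A \cdot A}\, (a, -A)$$

Notice from the above that if $q = (a, A)$ and we write $(a, -A)$ as $q^*$, then $q q^* = a^2 + A \cdot A = \|q\|^2$. Then $q^{-1} = \frac{q^*}{\|q\|^2}$. In particular, if $\|q\| = 1$ then $q^{-1} = q^*$. The condition for $q$ to represent a rotation is $\|q\| = 1$, so this is potentially a useful thing to know about.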
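The conjugate, squared length and inverse translate directly into code. This Python sketch repeats the hypothetical `qmul` helper on 4-tuples $(a, x, y, z)$ so that it runs on its own. The names `conj`, `norm_sq` and `inverse` are illustrative.

```python
def qmul(p, q):
    """Multiply 4-tuples (a, x, y, z) = a + (x, y, z)."""
    a, x1, y1, z1 = p
    b, x2, y2, z2 = q
    return (a * b - x1 * x2 - y1 * y2 - z1 * z2,
            a * x2 + b * x1 + y1 * z2 - z1 * y2,
            a * y2 + b * y1 + z1 * x2 - x1 * z2,
            a * z2 + b * z1 + x1 * y2 - y1 * x2)


def conj(q):
    """q* = (a, -A)."""
    a, x, y, z = q
    return (a, -x, -y, -z)


def norm_sq(q):
    """q q* = a^2 + A.A, the squared length of q as a 4-vector."""
    return sum(c * c for c in q)


def inverse(q):
    """q^{-1} = q* / |q|^2, defined for non-zero q."""
    n = norm_sq(q)
    if n == 0:
        raise ZeroDivisionError("the zero quaternion has no inverse")
    return tuple(c / n for c in conj(q))


q = (1.0, 2.0, -1.0, 0.5)
left = qmul(q, inverse(q))   # approximately (1, 0, 0, 0)
right = qmul(inverse(q), q)  # approximately (1, 0, 0, 0)
print(left, right)
```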

Before turning to associativity, let us deal with commutativity. This fails, as is easily seen by example:

$$ij = (-i \cdot j,\ i \times j) = k, \qquad ji = (-j \cdot i,\ j \times i) = -k$$

But this is okay. We're using some of these vectors to represent rotations and we want multiplication to correspond to composition and composition of rotations is not commutative.

And so, at last, to associativity. Using distributivity of multiplication over addition we can reduce the problem to one where each of the terms is either a "pure number" or a "pure vector".

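If one of them is a "pure number" then we can reduce the triple product to a binary product. This is because multiplication by a pure number corresponds to scalar multiplication:

$$(\lambda, 0)(b, B) = (\lambda b, \lambda B) = \lambda\, (b, B)$$

and from the formula for multiplication, it is clear that:

$$(\lambda p)\, q = \lambda\, (pq) = p\, (\lambda q)$$

(And similarly for multiplication on the right.) Thus multiplication is associative if one of the terms is a pure number.

We therefore just need to consider the case of pure vectors:

$$(AB)C = \left(-(A \times B) \cdot C,\ -(A \cdot B)\, C + (A \times B) \times C\right)$$

$$A(BC) = \left(-A \cdot (B \times C),\ -(B \cdot C)\, A + A \times (B \times C)\right)$$

The pure number terms are the same since the triple product is invariant under cyclic permutation:

$$(A \times B) \cdot C = A \cdot (B \times C)$$

So we just need to look at the vector parts.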

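Again using distributivity over addition and scalar multiplication, we can split it into cases where the vectors are either equal or orthogonal, and are of unit length.

- $B$ orthogonal to $A$.

  The vector part of $AB$ simplifies to $A \times B$. Moreover, $(A, B, A \times B)$ forms an oriented orthonormal basis.

  - $C$ orthogonal to both.

    Then $C$ is (up to sign) $A \times B$, and so the vector part of $(AB)C$ is $(A \times B) \times C = 0$.

    We have $B \cdot C = 0$, so that part of the second vanishes. Then, as $C$ is (up to sign) $A \times B$, $B \times C$ is (up to sign) $A$, and the cross product $A \times (B \times C)$ also vanishes.

    Thus both sides have vector part $0$.

  - $C = A$.

    Then $A \cdot B = 0$, so the vector term of $(AB)C$ is $(A \times B) \times A$. Looking at $A(BC)$, we see that $B \cdot C = B \cdot A = 0$, and so we are also left with $A \times (B \times A) = (A \times B) \times A$.

  - $C = B$.

    Then the vector part of $(AB)C$ is $(A \times B) \times B = -A$. In the second, the cross product term vanishes since $B \times C = B \times B = 0$, and $-(B \cdot C)A = -(B \cdot B)A = -A$, whence both vector parts are $-A$.

- $B = A$.

  In this case $AB = AA = -1$, so the vector part of the first term is just $-C$.

  - $C$ orthogonal to $A$.

    The dot product part of the second term is then zero, leaving just $A \times (A \times C)$. As $A$ and $C$ are orthogonal, $(A, C, A \times C)$ is an oriented orthonormal basis for $\mathbb{R}^3$. Then $A \times (A \times C) = -C$, whence we have $-C$ as the vector part of the second term.

  - $C = A$.

    The cross product in the second term vanishes, and the dot product term is $-(A \cdot A)A = -A = -C$.

This exhausts all the possibilities, and associativity is shown.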

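There is one last property that we want to show. This is to relate $(pq)^*$ to $p^*$ and $q^*$. It is a standard exercise to show that $(pq)^{-1} = q^{-1}p^{-1}$. Since $q^*$ differs from $q^{-1}$ simply by a scalar, this suggests that $(pq)^* = q^* p^*$ (the case where one is zero is trivial). To pin down the scalar, we can check directly: for $p = (a, A)$ and $q = (b, B)$, both sides equal $(ab - A \cdot B,\ -aB - bA - A \times B)$.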
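Associativity and the rule $(pq)^* = q^* p^*$ are easy to spot-check numerically. The following sketch repeats the same hypothetical 4-tuple helpers so that the block is self-contained. It tests both properties on three arbitrary quaternions, and shows that commutativity fails for them. A check on a few examples is evidence, not a proof.

```python
def qmul(p, q):
    """Multiply 4-tuples (a, x, y, z) = a + (x, y, z)."""
    a, x1, y1, z1 = p
    b, x2, y2, z2 = q
    return (a * b - x1 * x2 - y1 * y2 - z1 * z2,
            a * x2 + b * x1 + y1 * z2 - z1 * y2,
            a * y2 + b * y1 + z1 * x2 - x1 * z2,
            a * z2 + b * z1 + x1 * y2 - y1 * x2)


def conj(q):
    """q* = (a, -A)."""
    a, x, y, z = q
    return (a, -x, -y, -z)


def close(p, q, tol=1e-12):
    """True when two 4-tuples agree componentwise to within `tol`."""
    return all(abs(a - b) < tol for a, b in zip(p, q))


p = (1.0, 2.0, -1.0, 0.5)
q = (0.3, -0.7, 1.1, 2.0)
r = (-1.5, 0.4, 0.9, -0.2)
print(close(qmul(qmul(p, q), r), qmul(p, qmul(q, r))))  # True: associativity
print(close(conj(qmul(p, q)), qmul(conj(q), conj(p))))  # True: (pq)* = q* p*
print(close(qmul(p, q), qmul(q, p)))                    # False: not commutative
```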

We therefore have a multiplication on $\mathbb{R}^4$ that is associative, not commutative, and unital. It distributes over addition and scalar multiplication, and non-zero vectors have multiplicative inverses. There is also an involution, $q \mapsto q^*$.

Definition $\mathbb{R}^4$ together with the above structure is called the space of quaternions, and written $\mathbb{H}$.

($\mathbb{Q}$ was already taken by the rationals.)

8 Conclusion

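So a quaternion is a 4–vector where we know about multiplication. Unit quaternions, that is quaternions with $\|q\| = 1$, define rotations of $\mathbb{R}^3$. The implementation of this is to take a 3–vector, $v$, and promote it to a quaternion, $(0, v)$. Then, to apply a quaternion $q$, compute $q v q^*$. Composition of rotations corresponds to multiplication of quaternions since:

$$p\, (q v q^*)\, p^* = (pq)\, v\, (q^* p^*) = (pq)\, v\, (pq)^*$$

Knowing the angle $\theta$ and axis $a$, we can easily get the quaternion as $\left(\cos\frac{\theta}{2}, \sin\frac{\theta}{2}\, a\right)$. Knowing the quaternion, we can get the matrix by computing its effect on the standard basis vectors: $q = (c, v)$ has the effect:

$$u \mapsto (c^2 - \|v\|^2)\, u + 2(v \cdot u)\, v + 2c\, v \times u$$

So if we write $q = (w, x, y, z)$, we get the following matrix, whose columns are the images of $i$, $j$ and $k$:

$$\begin{pmatrix} w^2 + x^2 - y^2 - z^2 & 2(xy - wz) & 2(xz + wy) \\ 2(xy + wz) & w^2 - x^2 + y^2 - z^2 & 2(yz - wx) \\ 2(xz - wy) & 2(yz + wx) & w^2 - x^2 - y^2 + z^2 \end{pmatrix}$$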
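The matrix above can be written as a small Python function. The name `quat_to_matrix` is hypothetical, and the quaternion is assumed to be a unit 4-tuple $(w, x, y, z)$. As a check, a quarter turn about the $z$–axis (half angle $\pi/4$) should send $i$ to $j$.

```python
import math


def quat_to_matrix(q):
    """Rotation matrix for a unit quaternion q = (w, x, y, z).

    The columns are the images of the standard basis vectors i, j and k.
    """
    w, x, y, z = q
    return [[w * w + x * x - y * y - z * z, 2 * (x * y - w * z), 2 * (x * z + w * y)],
            [2 * (x * y + w * z), w * w - x * x + y * y - z * z, 2 * (y * z - w * x)],
            [2 * (x * z - w * y), 2 * (y * z + w * x), w * w - x * x - y * y + z * z]]


h = math.pi / 4  # quarter turn about z: angle pi/2, half angle pi/4
m = quat_to_matrix((math.cos(h), 0.0, 0.0, math.sin(h)))
first_column = [m[r][0] for r in range(3)]  # image of i; should be (0, 1, 0)
print(first_column)
```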

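The trickiest part is to go from the matrix to the quaternion. The angle comes from the trace. Recall that if $R$ is a rotation of angle $\theta$ then the trace of $R$, the sum of the diagonal entries, is $1 + 2\cos\theta$. Taking the trace of the matrix from the quaternion above, and using $w^2 + x^2 + y^2 + z^2 = 1$, we get:

$$3w^2 - x^2 - y^2 - z^2 = 4w^2 - 1$$

Up to sign, this gives us $w$. Then, provided $w \neq 0$, we can read off $x$, $y$ and $z$ using various entries $m_{rs}$ (row $r$, column $s$) of the matrix:

$$x = \frac{m_{32} - m_{23}}{4w}, \qquad y = \frac{m_{13} - m_{31}}{4w}, \qquad z = \frac{m_{21} - m_{12}}{4w}$$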

Note that we only get the quaternion up to a sign ambiguity.
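Here is a minimal Python sketch of this recovery. The name `matrix_to_quat` is hypothetical. It picks the sign with $w > 0$ and simply refuses when $w$ is close to $0$, that is, when the rotation angle is near $\pi$. A more careful implementation would divide by whichever of $w, x, y, z$ is largest instead. The round trip through `quat_to_matrix` (repeated so that the block runs alone) should return the quaternion unchanged.

```python
import math


def quat_to_matrix(q):
    """Rotation matrix for a unit quaternion q = (w, x, y, z)."""
    w, x, y, z = q
    return [[w * w + x * x - y * y - z * z, 2 * (x * y - w * z), 2 * (x * z + w * y)],
            [2 * (x * y + w * z), w * w - x * x + y * y - z * z, 2 * (y * z - w * x)],
            [2 * (x * z - w * y), 2 * (y * z + w * x), w * w - x * x - y * y + z * z]]


def matrix_to_quat(m):
    """Recover (w, x, y, z) from a rotation matrix via trace = 4w^2 - 1.

    Returns the representative with w > 0; assumes w is not close to 0.
    """
    trace = m[0][0] + m[1][1] + m[2][2]
    w = math.sqrt(max(0.0, trace + 1)) / 2
    if w < 1e-6:
        raise ValueError("w is (nearly) zero; this simple method does not apply")
    return (w,
            (m[2][1] - m[1][2]) / (4 * w),  # (m32 - m23) / 4w
            (m[0][2] - m[2][0]) / (4 * w),  # (m13 - m31) / 4w
            (m[1][0] - m[0][1]) / (4 * w))  # (m21 - m12) / 4w


raw = (0.8, 0.1, -0.4, 0.3)
n = math.sqrt(sum(c * c for c in raw))
q = tuple(c / n for c in raw)  # a unit quaternion with w > 0
back = matrix_to_quat(quat_to_matrix(q))
print(max(abs(a - b) for a, b in zip(q, back)) < 1e-12)  # True
```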

9 Loose Ends

There are two loose ends to tie up:

- What about a rotation of angle $0$?
- How many representations do we have per genuine rotation?

The first is easily dealt with. A rotation of angle $0$ can be about any axis, so in the "angle and axis" representation we have $(0, a)$ for any unit vector $a$. But when we translate this into quaternions, we need to multiply $a$ by $\sin 0 = 0$. So our representation for a rotation of angle $0$ is $(1, 0)$.

The second is not quite so simple. The fact that we keep dividing angles by $2$ actually means that we end up with two representations per rotation. Suppose we forgot that a rotation of $2\pi$ gets us back where we started. Then we might be tempted to encode a rotation of $2\pi$ about an axis $a$ as the angle and axis $(2\pi, a)$, which maps to $(\cos\pi, \sin\pi\, a) = (-1, 0)$. And in fact, that works: if $q = -1$ then $q v q^* = v$, so this is another encoding of the trivial rotation.

More generally, $q$ and $-q$ encode the same rotation since:

$$(-q)\, v\, (-q)^* = q\, v\, q^*$$

In technical terms, the unit quaternions provide a double cover of the space of rotations. This only proves problematic if working globally with rotations. If one restricts to a small patch of axes and angles then this issue introduces no difficulties: given a particular quaternion $q$ with rotation $R$, there is a correspondence between quaternions near $q$ and rotations near $R$.

Where one needs to be aware of this is if one takes a path of rotations that "goes all the way around". The corresponding path of quaternions will only go half way around.

This doubling is related to the half angles, and to the fact that when we rotate an axis by a rotation, we actually only rotate it by half the angle we thought we were rotating it by. Thus the unit quaternions are really acting not on points but on points with spin, and when we rotate a point with spin all the way around the circle, its axis only rotates half as much.

To go further in this vein would lead us to talk of spinors and fermions. Rather than going there, let me end with an alternative view of this doubling. Imagine attaching your point of interest by a ribbon to a "point at infinity". Now rotate it a full circle around the origin (with the rotation only affecting the ribbon by moving its end point, and at the crossing point we can lift the ribbon to let the point pass underneath). The ribbon is twisted. Do it again. The ribbon is still twisted. But now it is possible to untwist the ribbon without moving its endpoints.

Try it.

10 Why Bother?

The above explains what the quaternions are and aims to justify their role as an encoding scheme for rotations of 3–space. However, it is somewhat light on why one would use them and how best to do so.

The "Why?" question is comparative. The assumption is that one wants to use rotations in some fashion and so one needs an encoding scheme. Thus "Why use quaternions?" is really "Why use quaternions as opposed to XYZ?". Let's compare a few different schemes:

- Quaternions
- Matrices
- Angle and axis
- Rotations about the major axes

Here are some salient facts:

- Number of pieces of information to specify a rotation:
  - Quaternions: 4 numbers
  - Matrices: 9 numbers
  - Angle and axis: 4 numbers
  - Rotations about the major axes: 3 numbers
- Computation of composition:
  - Quaternions: 16 multiplications, 12 additions, 4 assignments
  - Matrices: 27 multiplications, 18 additions, 9 assignments
  - Angle and axis: Complicated! Involves cosine and sine of the angles involved.
  - Rotations about the major axes: Complicated! Involves cosine and sine of the angles involved.
- Computation of effect:
  - Quaternions: 32 multiplications, 24 additions, 8 assignments (in a lazy implementation that computes $q v q^*$ as two full quaternion products)
  - Matrices: 9 multiplications, 6 additions, 3 assignments
  - Angle and axis: Involves sine and cosine of the angle involved.
  - Rotations about the major axes: Involves sine and cosine of the angles involved.
- Robustness:
  - Quaternions: Very robust; any non-zero quaternion can be rescaled to a unit quaternion, so a minor variation in the numbers can easily be corrected.
  - Matrices: Not robust; it is possible to retract "all matrices" (well, half of all invertible matrices, to be precise) onto the rotation matrices, but the standard method for doing so is complicated and involves eigenvectors.
  - Angle and axis: Very robust; any non-zero vector defines an axis.
  - Rotations about the major axes: Very robust.
- Ambient space:
  - Quaternions: the ambient space of unit quaternions is all quaternions, a skew-field.
  - Matrices: the ambient space of rotation matrices is all $3 \times 3$ matrices, which is an algebra.
  - Angle and axis: the ambient space of axes is 3–space, a vector space.
  - Rotations about the major axes: no ambient space.

-

The ambient space is an important point. Rotations themselves have some structure but other than composition the structure is hard to use. Embedding them in some ambient space allows us access to all the structure of that ambient space. The catch is that using it might take us out of the space of rotations, but if there is a simple way to get back again then it can still be worth doing.

As an example, consider the problem of defining a path of rotations. Suppose we want to get from $R_0$ to $R_1$, but have no particular requirements on the path. With quaternions we can plan as follows. Represent these as $q_0$ and $q_1$, and assume that $q_0 \cdot q_1 \geq 0$. This loses no generality, since $q_1$ and $-q_1$ represent the same rotation. Then in $\mathbb{H}$ we can take the path $q(t) = (1 - t)\, q_0 + t\, q_1$. This goes from $q_0$ at $t = 0$ to $q_1$ at $t = 1$. Unfortunately, it probably does not consist of unit quaternions (and thus rotations). Fortunately, we can simply renormalise as we go along to fix this. (The assumption that $q_0 \cdot q_1 \geq 0$ ensures that we never go through the origin.) Doing the same thing with matrices is far more complicated. The projection from all suitable matrices to rotation matrices is harder to implement, and the space of matrices where this projection is defined is not as nice as "all non-zero quaternions".
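The renormalised straight-line path is only a few lines of code. In this Python sketch, `nlerp` is a hypothetical name and quaternions are unit 4-tuples $(w, x, y, z)$. The sign flip implements the "without loss of generality" step. In the example, the midpoint of the path from the identity to a rotation by $2$ radians about $z$ comes out as the rotation by $1$ radian about $z$.

```python
import math


def nlerp(q0, q1, t):
    """Point at time t on the renormalised straight-line path from q0 to q1.

    q0 and q1 are unit quaternions (w, x, y, z). If q0 . q1 < 0 we use -q1,
    which represents the same rotation, so the path never passes through 0.
    """
    if sum(a * b for a, b in zip(q0, q1)) < 0:
        q1 = tuple(-c for c in q1)
    q = tuple((1 - t) * a + t * b for a, b in zip(q0, q1))
    n = math.sqrt(sum(c * c for c in q))
    return tuple(c / n for c in q)


identity = (1.0, 0.0, 0.0, 0.0)
turn = (math.cos(1.0), 0.0, 0.0, math.sin(1.0))  # rotation by 2 radians about z
for t in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(t, nlerp(identity, turn, t))
```

One design note: equal steps in $t$ do not give equal steps in angle along this path. Spherical linear interpolation fixes that at the cost of some trigonometry. For simply getting from one rotation to another, the renormalised path is often enough.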

Therefore, quaternions beat all other representations except in the application of a quaternion to a vector, where matrices are better³.

³ This is because matrices are designed for being applied to vectors.

So the answer to the question "Why should I use quaternions for rotations?" is that if you intend to do anything more than just apply the rotation, quaternions make life easier and more robust.

11 How To Use Quaternions

At a very basic level, quaternions are easy to use. Once you have a library (such as mine) that implements the standard quaternionic structure, all the tools are there for using quaternions to represent rotations.

Quaternions are simply an encoding scheme for rotations so when using quaternions one should always break down the problem into two:

-

What do I want to do to the rotations?

-

How do I do that using quaternions?

In other words, there is a conceptual part and a practical part. Moreover, once the conceptual part is sorted out, the practical side is generally quite straightforward. So, in theory, the "How?" of quaternions should not be a difficult question.

However, what happens in practice rarely follows the theoretical. And whilst quaternions themselves should present no conceptual issues, their use often serves to highlight conceptual problems with rotations themselves. So let's take a couple of uses of rotations that go a bit beyond the basics and see how to encode them using quaternions.

The two examples are the following:

-

Use touch events to rotate the view.

-

Use the iPad's orientation information to rotate the view.

In both cases, we shall work by adjusting the arguments to the camera function. We shall start with the frame set up so that we are looking at the origin from a point along the positive $z$-axis with the $y$-axis as "up". To give us something to look at, we put a mesh at the origin.

11.1 Moving By Touch

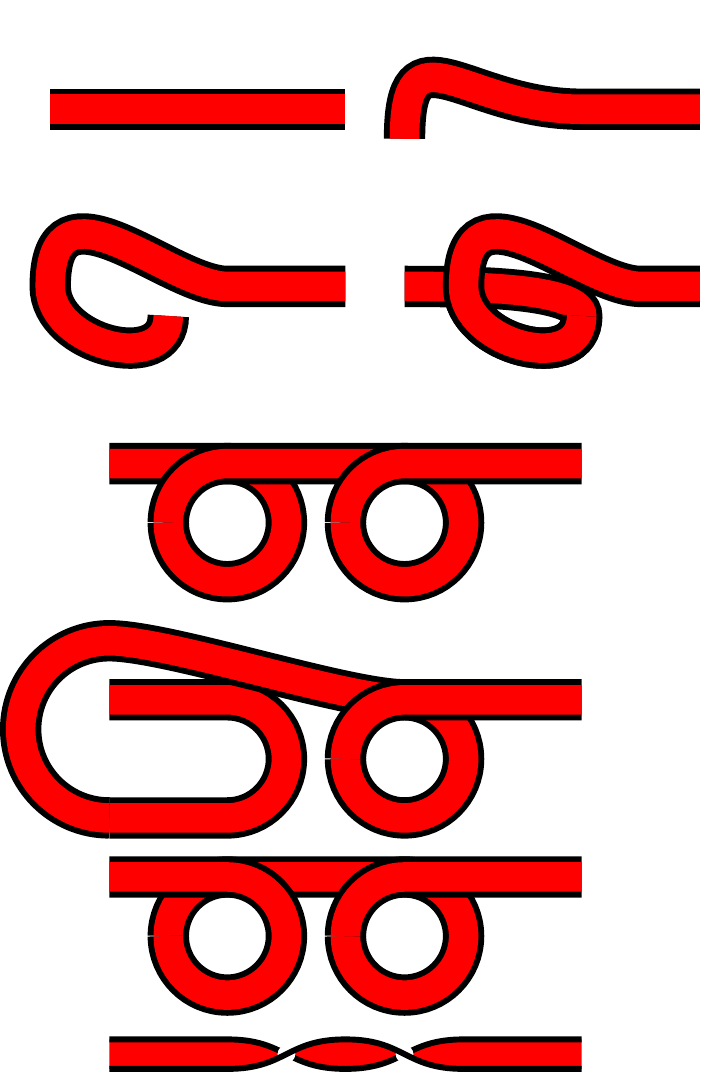

We're looking at the origin and want to rotate the scene by touching the screen. There are various ways to make this work; the following method is based on imagining that there is a transparent sphere between the camera and what it is looking at. When we touch the screen of the iPad it is as if we move that sphere around.

We shall assume that the sphere is roughly the size of the iPad screen. So when you touch the iPad screen, you are touching a point on the sphere above the point you think you are touching. Providing the camera is "far" from the sphere, we can use orthogonal projection to find this point (if the camera is "near" we should use a stereographic projection but complete accuracy is not a high priority here).

We touch the screen at $p = (x, y)$. This is relative to the bottom left of the screen, so we adjust its origin. We also normalise so that the sphere is of unit radius (we are in search of a rotation, so our calculations are "scale invariant"). Thus we set $s = (p - o)/r$, where $o$ is the centre of the screen and $r$ the radius of the sphere (half of whichever is the lesser of the width and height of the screen). Now, our calculations will go a bit wrong if we get too close to the "edge" of the sphere, so we only proceed if $\|s\|^2 < 0.9$. Our point on the sphere is then $S = \left(s_x, s_y, \sqrt{1 - \|s\|^2}\right)$.

We now move to a new point. We shift and scale this point in the same way and write $e$ for the result. As before, we set $E = \left(e_x, e_y, \sqrt{1 - \|e\|^2}\right)$.
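The shift, scale and lift steps can be sketched as a single plain-Lua function. It takes the screen size as parameters instead of Codea's WIDTH and HEIGHT, returns points as arrays {x, y, z}, and reuses the 0.9 cut-off from the example program. The name touchToSphere is hypothetical.

```lua
-- Map a touch at (x, y), measured from the bottom left of a width x height
-- screen, to a point on the unit sphere. Returns nil near the rim.
function touchToSphere(x, y, width, height)
  local r = math.min(width, height) / 2  -- radius of the sphere in pixels
  local sx = (x - width / 2) / r         -- move the origin to the centre
  local sy = (y - height / 2) / r        -- and scale to unit radius
  local ls = sx * sx + sy * sy
  if ls >= 0.9 then
    return nil                           -- too close to the "edge" of the sphere
  end
  -- orthogonal projection up onto the front of the sphere
  return {sx, sy, math.sqrt(1 - ls)}
end
```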

The problem is now to specify a rotation (in terms of a quaternion) that takes the point $S$ to the point $E$. As stated, this is not uniquely determined: we could arbitrarily rotate around $S$ before doing the rotation to $E$. So we add in the constraint that it should move space as little as possible. This means that we want the rotation in the plane of $S$ and $E$, so our axis is orthogonal to $S$ and $E$. The way to generate a vector orthogonal to two other vectors is to take their cross product, so we set $v = S \times E$.

The naïve way to proceed would be to normalise this axis, then figure out the angle, and feed that into the quaternion machine to find the rotation. However, we don't need to do all of that. If we let $\theta$ be the angle between $S$ and $E$ and let $n$ be the unit vector in the direction of $v$, then it turns out that $v = \sin(\theta)\, n$. Now, we actually want $\sin(\theta/2)\, n$, but we're not far off. We also have $S \cdot E = \cos(\theta)$. Now the double angle formula gives us that $\cos(\theta) = 2\cos^2(\theta/2) - 1$, thus we can set $\cos(\theta/2) = \sqrt{(1 + S \cdot E)/2}$ (we can take the positive square root as it is a safe bet that $\theta$ is quite small). The other double angle formula says that $\sin(\theta) = 2\sin(\theta/2)\cos(\theta/2)$, so $\sin(\theta/2)\, n = v / (2\cos(\theta/2))$.

Hence our quaternion is $q = c + \frac{1}{2c} v$, where $c = \sqrt{(1 + S \cdot E)/2}$.

Note the distinct absence of cosines and sines: the most complicated operation is a square root.
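Here is a sketch of that formula in plain Lua, with vectors as arrays {x, y, z} and quaternions as arrays {w, x, y, z}. All names are hypothetical; rotateVector (the action $v^q = q v q^{-1}$ for a unit quaternion) is included only so that the result can be checked.

```lua
function dot(u, v) return u[1]*v[1] + u[2]*v[2] + u[3]*v[3] end

function cross(u, v)
  return {u[2]*v[3] - u[3]*v[2], u[3]*v[1] - u[1]*v[3], u[1]*v[2] - u[2]*v[1]}
end

-- Hamilton product of quaternions {w, x, y, z}
function qmul(p, q)
  return {
    p[1]*q[1] - p[2]*q[2] - p[3]*q[3] - p[4]*q[4],
    p[1]*q[2] + p[2]*q[1] + p[3]*q[4] - p[4]*q[3],
    p[1]*q[3] - p[2]*q[4] + p[3]*q[1] + p[4]*q[2],
    p[1]*q[4] + p[2]*q[3] - p[3]*q[2] + p[4]*q[1],
  }
end

-- Rotate vector v by unit quaternion q: q v conj(q)
function rotateVector(v, q)
  local r = qmul(qmul(q, {0, v[1], v[2], v[3]}), {q[1], -q[2], -q[3], -q[4]})
  return {r[2], r[3], r[4]}
end

-- Quaternion taking unit vector S to unit vector E, with no sines or cosines:
-- c = cos(theta/2) and S x E = sin(theta) n, so sin(theta/2) n = (S x E) / (2c).
-- Breaks down as E approaches -S (c -> 0).
function rotateToHalfAngle(S, E)
  local c = math.sqrt((1 + dot(S, E)) / 2)
  local v = cross(S, E)
  return {c, v[1] / (2 * c), v[2] / (2 * c), v[3] / (2 * c)}
end
```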

An alternative method would be to take the bisector of $S$ and $E$. This is the renormalisation of $S + E$; let's call it $B$. The point of this is that the angle between $S$ and $B$ is $\theta/2$, so $S \cdot B = \cos(\theta/2)$; moreover, the cross product of $S$ and $B$ is in the same direction as that of $S$ and $E$ but with length $\sin(\theta/2)$. This moves the square root from the computation of the angle to the renormalisation of $S + E$. However, we can be a little sneaky, at the expense of a little accuracy. Instead of computing the bisector of $S$ and $E$, we can compute the midpoint of $s$ and $e$, say $m = (s + e)/2$. So long as everything is small, the point $M$ on the sphere above $m$ will be roughly the bisector of $S$ and $E$. Then our quaternion is $q = S \cdot M + S \times M$.
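The sneaky midpoint version can be sketched in the same way. This assumes s and e are the shifted and scaled touch points, as arrays {x, y} inside the unit disc; lift and rotateToMidpoint are hypothetical names, and the helpers are repeated so that the block stands alone.

```lua
function dot(u, v) return u[1]*v[1] + u[2]*v[2] + u[3]*v[3] end

function cross(u, v)
  return {u[2]*v[3] - u[3]*v[2], u[3]*v[1] - u[1]*v[3], u[1]*v[2] - u[2]*v[1]}
end

-- Hamilton product of quaternions {w, x, y, z}
function qmul(p, q)
  return {
    p[1]*q[1] - p[2]*q[2] - p[3]*q[3] - p[4]*q[4],
    p[1]*q[2] + p[2]*q[1] + p[3]*q[4] - p[4]*q[3],
    p[1]*q[3] - p[2]*q[4] + p[3]*q[1] + p[4]*q[2],
    p[1]*q[4] + p[2]*q[3] - p[3]*q[2] + p[4]*q[1],
  }
end

-- Rotate vector v by unit quaternion q: q v conj(q)
function rotateVector(v, q)
  local r = qmul(qmul(q, {0, v[1], v[2], v[3]}), {q[1], -q[2], -q[3], -q[4]})
  return {r[2], r[3], r[4]}
end

-- Lift a point {x, y} of the unit disc to the front of the unit sphere
function lift(p)
  return {p[1], p[2], math.sqrt(1 - p[1]*p[1] - p[2]*p[2])}
end

-- Approximate rotation from lift(s) to lift(e): the lifted midpoint M stands in
-- for the exact bisector, and q = S . M + S x M is then exactly unit length.
function rotateToMidpoint(s, e)
  local S = lift(s)
  local M = lift({(s[1] + e[1]) / 2, (s[2] + e[2]) / 2})
  local v = cross(S, M)
  return {dot(S, M), v[1], v[2], v[3]}
end
```

For small moves the rotated S lands very close to E, which is all that is needed for interactive dragging.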

The last thing to note is that we actually want the inverse of this quaternion. This is because we are actually applying our transformation to the camera and not to the world inside. Thus we are not actually turning the sphere, rather we are moving ourselves around on (or relative to) the sphere's surface.

To conclude this section, let's extract a useful nugget. The problem is to rotate space so that a line, say $\ell_1$, ends up at, say, $\ell_2$. The strategy is as follows. Pick unit vectors, $u$ along $\ell_1$ and $w$ along $\ell_2$. Compute $u + w$. If it is non-zero, renormalise it to $b$. If not, let $b$ be an arbitrary unit vector orthogonal to $u$. Then the quaternion for the rotation is $q = u \cdot b + u \times b$.
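The nugget translates into a short general-purpose function. This is a plain-Lua sketch, not the library's own v:rotateTo; the inputs need not be unit length, and the choice of helper axis in the opposite-direction case is an arbitrary assumption.

```lua
function dot(u, v) return u[1]*v[1] + u[2]*v[2] + u[3]*v[3] end

function cross(u, v)
  return {u[2]*v[3] - u[3]*v[2], u[3]*v[1] - u[1]*v[3], u[1]*v[2] - u[2]*v[1]}
end

function normalise(v)
  local l = math.sqrt(dot(v, v))
  return {v[1] / l, v[2] / l, v[3] / l}
end

-- Hamilton product of quaternions {w, x, y, z}
function qmul(p, q)
  return {
    p[1]*q[1] - p[2]*q[2] - p[3]*q[3] - p[4]*q[4],
    p[1]*q[2] + p[2]*q[1] + p[3]*q[4] - p[4]*q[3],
    p[1]*q[3] - p[2]*q[4] + p[3]*q[1] + p[4]*q[2],
    p[1]*q[4] + p[2]*q[3] - p[3]*q[2] + p[4]*q[1],
  }
end

-- Rotate vector v by unit quaternion q: q v conj(q)
function rotateVector(v, q)
  local r = qmul(qmul(q, {0, v[1], v[2], v[3]}), {q[1], -q[2], -q[3], -q[4]})
  return {r[2], r[3], r[4]}
end

-- Quaternion {w, x, y, z} rotating the direction of u onto the direction of w
function rotateTo(u, w)
  u, w = normalise(u), normalise(w)
  local b = {u[1] + w[1], u[2] + w[2], u[3] + w[3]}
  if dot(b, b) > 1e-12 then
    b = normalise(b)                      -- the bisector of u and w
  else
    -- u and w are opposite: pick any unit vector orthogonal to u
    local a = math.abs(u[1]) < 0.9 and {1, 0, 0} or {0, 1, 0}
    b = normalise(cross(u, a))
  end
  local v = cross(u, b)
  return {dot(u, b), v[1], v[2], v[3]}
end
```

In the opposite case the result is a half-turn about an axis orthogonal to u, which does take u to w as required.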

11.2 Moving By iPad

One of the great things about an iPad is the ability to pick it up and move it. In Codea 1.4, we can partially detect how an iPad is being held by examining the Gravity vector. With Codea 1.5 we also get access to the RotationRate.

Let's start with just Codea 1.4. The task here is to rotate the coordinate system so that the $y$-axis is always true vertical. This is not sufficiently specified, so to make it so we also require the $x$-axis to lie in the iPad screen. There will be certain orientations where this is still not sufficiently precise (namely, when the iPad is held horizontal), but for all else it will do.

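Assuming that the $y$-axis is not parallel to the gravity vector, we can proceed as before, since we want to move the $y$-axis to the gravity vector. Let us write $g$ for the gravity vector (normalised to unit length); then we compute the bisector, say $b$, as the renormalisation of $(0, 1, 0) + g$. Then the real part of the quaternion is $b_y$ and its vector part is $(0, 1, 0) \times b = (b_z, 0, -b_x)$.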

Once again we need the inverse rotation.

This simply rotates the $y$-axis to where we want it. We also need to rotate around the $y$-axis to get the $x$-axis into the iPad screen. To do this, we need to know the vector in the screen that is orthogonal to the gravity vector. This is (up to scale) $(-g_y, g_x, 0)$. So we rotate to get the $x$-axis to point in this direction, using the above method: set $b = (-g_y, g_x, 0)$, normalise, add $(1, 0, 0)$, normalise, and then get the quaternion, which will be $b_x + b_y k$ (assuming we are storing the result in $b$).

Now here's where it gets complicated. We have two rotations to apply. The first thing to note is that the order in which we apply them matters. The second thing to note is that we've computed each one with respect to the standard reference frame. But once we apply one rotation we need to ensure that the second is with respect to the new reference frame.

Let's rotate the $x$-axis first. Let $q_x$ be the resultant quaternion. Now $q_x$ represents a rotation of space and it has been designed so that it takes the model's frame of reference to the screen's frame of reference. That is, $(0, 1, 0)^{q_x}$ is where the model's $y$-axis will end up on screen.

(I've sneaked in some notation there. Often when something like quaternions acts on something different, like vectors, we write the action simply like multiplication: $q v$. But in this case that is ambiguous, since it makes sense to multiply a quaternion by a vector by thinking of the vector as a quaternion. To avoid this ambiguity, we use the alternative exponential notation for action: $v^q$. Thus $v^q = q v q^{-1}$.)

The second rotation is designed to take the $y$-axis to be parallel to the gravity vector. We're applying this after the first one. The key point here is that the $y$-axis that we are working with is the model's $y$-axis. This isn't the current $y$-axis, which is the screen's $y$-axis. Rather, it is $(0, 1, 0)^{q_x}$. So our second rotation takes $(0, 1, 0)^{q_x}$ to the gravity vector. (Or rather, to $-g$, since we want gravity to be downwards.)
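Putting the two steps together gives the following plain-Lua sketch, with arrays for vectors and quaternions, hypothetical helper names, and g standing in for Codea's Gravity vector. It takes the $x$-axis into the screen first and then the model's $y$-axis to $-g$. The combined quaternion is qy * qx because qy acts after qx. It is undefined when the iPad is held flat, since $(-g_y, g_x, 0)$ then vanishes.

```lua
function dot(u, v) return u[1]*v[1] + u[2]*v[2] + u[3]*v[3] end

function cross(u, v)
  return {u[2]*v[3] - u[3]*v[2], u[3]*v[1] - u[1]*v[3], u[1]*v[2] - u[2]*v[1]}
end

function normalise(v)
  local l = math.sqrt(dot(v, v))
  return {v[1] / l, v[2] / l, v[3] / l}
end

-- Hamilton product of quaternions {w, x, y, z}
function qmul(p, q)
  return {
    p[1]*q[1] - p[2]*q[2] - p[3]*q[3] - p[4]*q[4],
    p[1]*q[2] + p[2]*q[1] + p[3]*q[4] - p[4]*q[3],
    p[1]*q[3] - p[2]*q[4] + p[3]*q[1] + p[4]*q[2],
    p[1]*q[4] + p[2]*q[3] - p[3]*q[2] + p[4]*q[1],
  }
end

-- The action v^q = q v q^{-1} for a unit quaternion q
function rotateVector(v, q)
  local r = qmul(qmul(q, {0, v[1], v[2], v[3]}), {q[1], -q[2], -q[3], -q[4]})
  return {r[2], r[3], r[4]}
end

-- Quaternion taking the direction of u to the direction of w (bisector method)
function rotateTo(u, w)
  u, w = normalise(u), normalise(w)
  local b = {u[1] + w[1], u[2] + w[2], u[3] + w[3]}
  if dot(b, b) > 1e-12 then
    b = normalise(b)
  else
    local a = math.abs(u[1]) < 0.9 and {1, 0, 0} or {0, 1, 0}
    b = normalise(cross(u, a))
  end
  local v = cross(u, b)
  return {dot(u, b), v[1], v[2], v[3]}
end

-- Rotation taking the model's x-axis into the screen plane (z = 0) and the
-- model's y-axis to -g ("up"). Undefined when g lies along the z-axis.
function gravityRotation(g)
  -- Step 1: the x-axis to the screen direction orthogonal to g
  local qx = rotateTo({1, 0, 0}, {-g[2], g[1], 0})
  -- Step 2: the model's y-axis, now at (0,1,0)^qx, to -g
  local y1 = rotateVector({0, 1, 0}, qx)
  local qy = rotateTo(y1, {-g[1], -g[2], -g[3]})
  -- qy acts after qx, so it goes on the left
  return qmul(qy, qx)
end
```

Step 2 leaves the new $x$-axis where step 1 put it, because both $(0,1,0)^{q_x}$ and $-g$ are orthogonal to that axis, so the minimal rotation between them is around it.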

11.3 Combining the Two

We now have two sources of rotation: the tilt of the iPad and the touch data. We want to put the two together. But in which order?

To do this we need to work out what the user will expect. My assumption is that the user will expect that when they turn the object using touch, then they are genuinely turning the object. However, when tilting the iPad they are turning their view of the object. So the touch data needs to be "innermost". That is to say, our total rotation needs to be $q_g q_t$, where $q_g$ is the rotation coming from the tilt of the iPad and $q_t$ the rotation coming from the touch data.

But there is a snag here. The actual touch data is relative to the screen. That is, when the user touches the screen, the new information is relative to the outermost set of coordinates. So we need to transform it into the innermost by conjugating it by the gravity rotation. Thus when the user touches the screen and generates a rotation $q_a$, we need to update the touch rotation by $q_t \leftarrow q_g^{-1} q_a q_g q_t$.

Now notice what happens when we put that together with $q_g$ (with $q_t$ being the "old" rotation):

$$q_g \left(q_g^{-1} q_a q_g q_t\right) = q_a q_g q_t.$$

So $q_a$ is actually being applied relative to the screen coordinates, as it should be. The point is that the $q_g$ used in updating $q_t$ is then "frozen" at the time of updating. Then if the iPad is tilted further, so that $q_g$ changes, the above simplification no longer holds. Thus $q_a$ is always relative to the screen coordinates that were in effect when the touch occurred.
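A quick numerical check of that simplification in plain Lua, with quaternions as arrays {w, x, y, z}. The function touchUpdate is a hypothetical name mirroring the update q = gq^"" * qa * gq * q in the example program; the test quaternions are arbitrary unit quaternions.

```lua
-- Hamilton product of quaternions {w, x, y, z}
function qmul(p, q)
  return {
    p[1]*q[1] - p[2]*q[2] - p[3]*q[3] - p[4]*q[4],
    p[1]*q[2] + p[2]*q[1] + p[3]*q[4] - p[4]*q[3],
    p[1]*q[3] - p[2]*q[4] + p[3]*q[1] + p[4]*q[2],
    p[1]*q[4] + p[2]*q[3] - p[3]*q[2] + p[4]*q[1],
  }
end

-- Conjugate; equal to the inverse for unit quaternions
function qconj(q) return {q[1], -q[2], -q[3], -q[4]} end

-- Fold a new screen-relative touch rotation qa into the touch rotation qt,
-- conjugating by the current (unit) gravity rotation qg.
function touchUpdate(qt, qg, qa)
  return qmul(qmul(qmul(qconj(qg), qa), qg), qt)
end
```

Multiplying the updated touch rotation on the left by $q_g$ should give $q_a q_g q_t$, which is what the test checks.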

11.4 Using Rotation Rate

With Codea 1.5 comes the RotationRate vector. Although a vector, it represents a rotation. The three components are the rotations that the iPad felt around its major axes. Technically, these are Tait-Bryan angles because they use three different axes, though they are sometimes referred to as Euler angles or yaw, pitch, and roll.

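The units of RotationRate are radians per second. Thus, multiplying each component by the time elapsed since the last frame, we can convert it to an angle and hence to a quaternion rotating around the given axis. This is the "instantaneous" rotation and is relative to the current screen coordinates, so we need to keep a running total, $q_r$, to keep track of how the iPad moves. Thus each frame we need to multiply $q_r$ by the product of the three single-axis rotations $q_x$, $q_y$ and $q_z$, where, for example, $q_x = \cos(\tfrac{1}{2} r_x \Delta t) + \sin(\tfrac{1}{2} r_x \Delta t)\, i$, with $r_x$ the first component of RotationRate and $\Delta t$ the frame time.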
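A sketch of one frame of this bookkeeping in plain Lua, with quaternions as arrays {w, x, y, z}. The rate array stands in for RotationRate and dt for the frame time (DeltaTime in Codea). Multiplying on the right and taking the product in $x$, $y$, $z$ order are assumptions of this sketch. The right order is exactly what the test described next is meant to decide.

```lua
-- Hamilton product of quaternions {w, x, y, z}
function qmul(p, q)
  return {
    p[1]*q[1] - p[2]*q[2] - p[3]*q[3] - p[4]*q[4],
    p[1]*q[2] + p[2]*q[1] + p[3]*q[4] - p[4]*q[3],
    p[1]*q[3] - p[2]*q[4] + p[3]*q[1] + p[4]*q[2],
    p[1]*q[4] + p[2]*q[3] - p[3]*q[2] + p[4]*q[1],
  }
end

-- Unit quaternion for a rotation by `angle` radians about a unit axis
function axisAngle(angle, ax, ay, az)
  local s = math.sin(angle / 2)
  return {math.cos(angle / 2), s * ax, s * ay, s * az}
end

-- Fold one frame of rotation-rate data (rad/s) over dt seconds into qr
function accumulateRate(qr, rate, dt)
  local qx = axisAngle(rate[1] * dt, 1, 0, 0)
  local qy = axisAngle(rate[2] * dt, 0, 1, 0)
  local qz = axisAngle(rate[3] * dt, 0, 0, 1)
  return qmul(qr, qmul(qx, qmul(qy, qz)))  -- assumed order: x, y, z
end
```

Turning at $\pi/2$ radians per second about the $x$-axis for one second (60 frames) should accumulate to a quarter turn about that axis.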

One obvious question is the order in which to multiply the rotations, since the order matters. The best way to figure this out is to test it: have Codea compute all six possible products and display the resulting quaternions. Then place the iPad in a known orientation and start the program. Rotate the iPad freely and wildly (making sure not just to rotate around the principal axes) and return it to the known state. The one that is closest to $1$ (or to $-1$, which represents the same rotation) is the right order.

My tests show that is right.

However, as it is cumulative, inaccuracies will build up and so it should not be relied upon to give a truly accurate portrayal of the orientation of the iPad.

A more robust solution is to rotate according to RotationRate and then correct using Gravity. This will ensure that the vertical is correct, whilst giving a reasonable rotation in the horizontal plane.

12 An Example Program

Below is a simple program that uses quaternions to control the camera. It uses my Quaternion library. I put all of my libraries into a single project, which I then import into a project if it needs some of them. To avoid importing all of the libraries when I just need a few, I have an import function for loading only the necessary ones. If you just want the Quaternion library and don't want to bother with my import functions, you need to delete the line import.libraries.Quaternion = function() at the start of the library and the final end at the end of it. In the main program itself, remove the line import.library({"Quaternion"}).

The program displays a pyramidal mesh which should seem "fixed" in space as you rotate and tilt the iPad. You can also move it by touching the screen.

Much of the detail is hidden in the Quaternion library.

```lua
displayMode(FULLSCREEN)
supportedOrientations(PORTRAIT_ANY)

function setup()
    -- Import the quaternion functions
    -- (Delete if not using the import facility)
    import.library({"Quaternion"})

    -- Initial camera parameters
    eye = vec3(0,0,20)
    look = vec3(0,0,0)
    up = vec3(0,1,0)

    -- Define a simple mesh
    m = mesh()
    m.vertices = {
        vec3(0,0,0), vec3(1,0,0), vec3(0,1,0),
        vec3(0,0,0), vec3(0,1,0), vec3(0,0,1),
        vec3(0,0,0), vec3(0,0,1), vec3(1,0,0),
        vec3(1,0,0), vec3(0,1,0), vec3(0,0,1)
    }
    m.colors = {
        color(255, 255, 255, 255), color(255, 0, 0, 255), color(0, 255, 0, 255),
        color(255, 255, 255, 255), color(0, 255, 0, 255), color(0, 0, 255, 255),
        color(255, 255, 255, 255), color(0, 0, 255, 255), color(255, 0, 0, 255),
        color(255, 0, 0, 255), color(0, 255, 0, 255), color(0, 0, 255, 255)
    }

    -- q holds the rotation due to touch
    q = Quaternion(1,0,0,0)
    -- qr holds the rotation from RotationRate
    qr = Quaternion(1,0,0,0)
end

function draw()
    -- update qr
    qr:updateReferenceFrame()
    -- get the gravitational rotation
    local gq = qr:Gravity()
    -- multiply by the touch rotation and conjugate
    gq = (gq*q)^""
    -- adapt the camera positions
    local u = up^gq
    local e = eye^gq
    local l = look^gq
    background(0, 0, 0, 255)
    perspective(10,WIDTH/HEIGHT)
    camera(e.x,e.y,e.z,l.x,l.y,l.z,u.x,u.y,u.z)
    m:draw()
end

function touched(touch)
    -- only if we've moved
    if touch.state ~= BEGAN then
        -- convert touch previous and current positions to points
        -- relative to a unit circle
        local r = math.min(HEIGHT,WIDTH)/2
        local o = vec2(WIDTH,HEIGHT)/2
        local s = vec2(touch.prevX,touch.prevY) - o
        s = s/r
        local e = vec2(touch.x,touch.y) - o
        e = e/r
        local ls = s:lenSqr()
        local le = e:lenSqr()
        -- so long as we're in the circle and have moved
        if ls < .9 and le < .9 and e ~= s then
            -- raise points to sphere
            local S = vec3(s.x,s.y,math.sqrt(1 - ls))
            local E = vec3(e.x,e.y,math.sqrt(1 - le))
            -- Compute rotation
            local qa = S:rotateTo(E)
            local gq = qr:Gravity()
            -- Conjugate by gravity and add to current rotation
            q = gq^"" * qa * gq * q
        end
    end
end
```

13 The Quaternion Library

The Quaternion library defines a class for quaternions with quaternionic operations as the methods. A quaternion can be specified in one of four ways: by passing four numbers, a single number and a 3-vector, a 4-vector, or another instance of a Quaternion (which will be cloned). The initialisation function doesn't do any serious checking and just looks at the number of arguments to decide which is being used.

Internally, the actual quaternion is stored as a vec4.

There are some simple tests (assuming that q and qq have been initialised as Quaternions):

-

q:is_zero(): tests if q is the zero Quaternion. Note that this tests if each entry is zero.

-

q:is_real(): a quaternion is real if it is of the form $a + 0i + 0j + 0k$.

-

q:is_imaginary(): a quaternion is imaginary if it is of the form $0 + bi + cj + dk$.

-

q:is_eq(qq): tests for equality. This tests if each entry is equal, not if q and qq are pointers (in Lua) to the same instance of the class. This is bound to the == operator so that if q == qq then ... end works as mathematical equality.

Then there are all the mathematical functions that manipulate quaternions. Note that these always return new objects; none of them modifies the object itself.

-

q:dot(qq): computes the dot (inner) product of two quaternions. This used to be bound to the .. operator (and the code is still in the library but commented out). I removed this once I wanted to be able to typeset quaternions.

-

q:len(): computes the length of a quaternion, which is the same as the length of it viewed as a 4-vector.

-

q:lenSqr(): computes the square of the length, which is a bit cheaper than the length and often good enough. (Note: this used to be called lensq, but lenSqr is what is used for the various vec objects in Codea.)

-

q:normalise(), q:normalize(): normalises the quaternion to unit length.

-

q:scale(l): scales the quaternion by the factor l.

-

q:add(qq): adds two quaternions, or adds a number to a quaternion.

-

q:subtract(qq): subtracts a quaternion or a number from a quaternion.

-

q:multiplyRight(qq): multiplication on the right: q * qq.

-

q:multiplyLeft(qq): multiplication on the left: qq * q. Recall that for quaternions, the order of multiplication matters.

-

q:conjugate(), q:co(): conjugation of q (the operation $a + bi + cj + dk \mapsto a - bi - cj - dk$; for rotations this computes the inverse). The co form is to ease typing.

-

q:reciprocal(): returns the multiplicative inverse, $q^{-1} = \bar{q}/\|q\|^2$. It tests whether the quaternion is zero, but simply complains if it is.

-

q:power(n): computes the $n$th power of the quaternion for an integer $n$.

-

q:real(): returns the real part (first component) of the quaternion.

-

q:vector(): returns the vector part (last three components) of the quaternion as a vec3 object.

-

q:tostring(): converts the quaternion to a string for "pretty printing". Note that it might not be a faithful representation, so it shouldn't be used for serialising the quaternion.

-

q:tomatrix(): converts the quaternion to the corresponding $3 \times 3$ rotation matrix. The matrix is actually $4 \times 4$, since that is the type of matrix used by Codea for representing transformations of the display.
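To make the connection between q:tomatrix() and the rotation action concrete, here is a plain-Lua sketch of the $3 \times 3$ rotation matrix of a unit quaternion {w, x, y, z}, as nested row-major tables. It is not the library's code, and it does not reproduce how the entries are laid out in Codea's $4 \times 4$ matrix type; quatToMatrix and matVec are hypothetical names.

```lua
-- Hamilton product of quaternions {w, x, y, z}
function qmul(p, q)
  return {
    p[1]*q[1] - p[2]*q[2] - p[3]*q[3] - p[4]*q[4],
    p[1]*q[2] + p[2]*q[1] + p[3]*q[4] - p[4]*q[3],
    p[1]*q[3] - p[2]*q[4] + p[3]*q[1] + p[4]*q[2],
    p[1]*q[4] + p[2]*q[3] - p[3]*q[2] + p[4]*q[1],
  }
end

-- Rotate vector v by unit quaternion q: q v conj(q)
function rotateVector(v, q)
  local r = qmul(qmul(q, {0, v[1], v[2], v[3]}), {q[1], -q[2], -q[3], -q[4]})
  return {r[2], r[3], r[4]}
end

-- 3x3 rotation matrix (row-major) of a unit quaternion q = {w, x, y, z},
-- chosen so that M * v equals the rotated vector q v conj(q)
function quatToMatrix(q)
  local w, x, y, z = q[1], q[2], q[3], q[4]
  return {
    {1 - 2*(y*y + z*z), 2*(x*y - w*z),     2*(x*z + w*y)},
    {2*(x*y + w*z),     1 - 2*(x*x + z*z), 2*(y*z - w*x)},
    {2*(x*z - w*y),     2*(y*z + w*x),     1 - 2*(x*x + y*y)},
  }
end

-- Matrix times column vector
function matVec(M, v)
  local r = {}
  for i = 1, 3 do
    r[i] = M[i][1]*v[1] + M[i][2]*v[2] + M[i][3]*v[3]
  end
  return r
end
```

Once the matrix is built, each further vector costs nine multiplications. This is the sense in which matrices are better for applying a rotation to many vectors.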

It is possible to use infix operations with Quaternion objects. In particular, the following all make sense where q and qq are Quaternions and n is a number.

-

q + qq, n + q, q + n

-

q - qq, n - q, q - n

-

q * qq, n * q, q * n

-

q / qq, n / q, q / n

-

q^n for n an integer; q^qq computes qq * q * (1/qq); q^"" (in fact, anything "else") computes the conjugate of q (I use "" as it is a single key press in Codea)

-

s .. q, q .. s (concatenation with a string s)
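In Lua, infix operators like these are provided through metamethods. The following is a minimal, hypothetical Quat class (not the library's code). It shows how +, *, == and the three meanings of ^ listed above can be wired up. Only a few operators are included; __sub, __div and __concat would follow the same pattern.

```lua
Quat = {}
Quat.__index = Quat

function Quat.new(w, x, y, z)
  return setmetatable({w = w, x = x, y = y, z = z}, Quat)
end

-- Promote numbers to real quaternions so that n + q and q * n work
local function promote(a)
  if type(a) == "number" then return Quat.new(a, 0, 0, 0) end
  return a
end

function Quat.__add(a, b)
  a, b = promote(a), promote(b)
  return Quat.new(a.w + b.w, a.x + b.x, a.y + b.y, a.z + b.z)
end

-- Hamilton product
function Quat.__mul(a, b)
  a, b = promote(a), promote(b)
  return Quat.new(
    a.w*b.w - a.x*b.x - a.y*b.y - a.z*b.z,
    a.w*b.x + a.x*b.w + a.y*b.z - a.z*b.y,
    a.w*b.y - a.x*b.z + a.y*b.w + a.z*b.x,
    a.w*b.z + a.x*b.y - a.y*b.x + a.z*b.w)
end

function Quat.__eq(a, b)
  return a.w == b.w and a.x == b.x and a.y == b.y and a.z == b.z
end

function Quat:conjugate()
  return Quat.new(self.w, -self.x, -self.y, -self.z)
end

function Quat:reciprocal()
  local l = self.w^2 + self.x^2 + self.y^2 + self.z^2
  assert(l > 0, "cannot invert the zero quaternion")
  local c = self:conjugate()
  return Quat.new(c.w / l, c.x / l, c.y / l, c.z / l)
end

-- q^n (integer n), q^qq = qq * q * (1/qq), and q^anything-else = conjugate
function Quat.__pow(q, e)
  if type(e) == "number" then
    local base, r = q, Quat.new(1, 0, 0, 0)
    if e < 0 then base, e = q:reciprocal(), -e end
    for _ = 1, e do r = r * base end
    return r
  elseif getmetatable(e) == Quat then
    return e * q * e:reciprocal()
  end
  return q:conjugate()
end
```

The string case is caught by the final fallthrough, which is why q^"" (or any other non-number, non-quaternion exponent) yields the conjugate.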

The next set of functions relate to rotating the frame of reference in accordance with the Gravity and RotationRate vectors. These are almost not instance methods, in that it makes almost no sense to call them on a particular instance of the Quaternion class. The catch is that the RotationRate information must be processed only once per frame. It keeps a running total of the rotations, and updating it twice in one frame would mean that that frame's rotations got added twice. I could fix this by hooking into the draw function and adding the relevant function at the start, but I am reluctant to mess around with draw functions at the library level for various reasons. If it were only the user that ever used this directly, there would not be a problem: it is a simple case of caveat programmator. But it might be that someone implements a library on top of the Quaternion library that uses this functionality internally, and maybe more than one library, in which case the libraries would have to figure out for themselves whether the updating had already been taken care of.

A simpler solution seemed to be to allow the RotationRate information to be stored in a local variable as well as a global one. So a library creates its own store for the RotationRate and a programmer can use the global one. This does mean that the RotationRate might get processed several times unnecessarily, but if this becomes the sticking point in a program then it is probably at the stage where everything is already written and conflicts as described above can be resolved amicably.

-

q:Gravity(): The intention here is to rotate the $y$-axis to line up with gravity. The input quaternion, q, is taken to be an initial rotation that is to be applied, the intention being that this is the rotation coming from the RotationRate information. If q is not given, that is, if this function is called as Quaternion.Gravity(), then the global accumulation of RotationRate is used.

-

q:updateReferenceFrame(): This processes the RotationRate data and adds it to q, or to the global storage. Note that this modifies q directly.

-

q:ReferenceFrame(): This is really for getting the global storage of the rotation, but if called with an actual Quaternion object then it just returns that object.

Then there are some more creation functions. These are not instance methods.

-

Quaternion.Rotation(a,...): This creates a quaternion corresponding to a rotation specified by an angle and an axis. The axis can be specified as a vec3 object or as three numbers. It does not have to be of unit length.

-

Quaternion.unit(): This returns a new Quaternion equal (mathematically) to $1$.

The vec3 class has some extra bits added to it to make it interact well with quaternions. Here, v is assumed to be a vec3.

-

v:toQuaternion(): returns the quaternion $0 + v$, that is, $v_x i + v_y j + v_z k$.

-

v:applyQuaternion(q): applies the quaternion to the vector as a rotation (the quaternion is assumed to be of unit length). The ^ operator is extended to allow this to be written as v^q.

-

v:rotateTo(u): returns the quaternion needed to rotate v to be in line with u.